url (string, 58-61 chars) | repository_url (string, 1 value) | labels_url (string, 72-75 chars) | comments_url (string, 67-70 chars) | events_url (string, 65-68 chars) | html_url (string, 46-51 chars) | id (int64, 599M-2.8B) | node_id (string, 18-32 chars) | number (int64, 1-7.38k) | title (string, 1-290 chars) | user (dict) | labels (list, 0-4 items) | state (string, 2 values) | locked (bool, 1 class) | assignee (dict) | assignees (list, 0-4 items) | milestone (dict) | comments (sequence, 0-0 items) | created_at (unknown) | updated_at (unknown) | closed_at (timestamp[us]) | author_association (string, 4 values) | sub_issues_summary (dict) | active_lock_reason (float64) | body (string, 0-228k chars, ⌀) | closed_by (dict) | reactions (dict) | timeline_url (string, 67-70 chars) | performed_via_github_app (float64) | state_reason (string, 3 values) | draft (float64, 0-1, ⌀) | pull_request (dict) | is_pull_request (bool, 2 classes) |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

https://api.github.com/repos/huggingface/datasets/issues/7378 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7378/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7378/comments | https://api.github.com/repos/huggingface/datasets/issues/7378/events | https://github.com/huggingface/datasets/issues/7378 | 2,802,957,388 | I_kwDODunzps6nEbxM | 7,378 | Allow pushing config version to hub | {

"avatar_url": "https://avatars.githubusercontent.com/u/129072?v=4",

"events_url": "https://api.github.com/users/momeara/events{/privacy}",

"followers_url": "https://api.github.com/users/momeara/followers",

"following_url": "https://api.github.com/users/momeara/following{/other_user}",

"gists_url": "https://api.github.com/users/momeara/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/momeara",

"id": 129072,

"login": "momeara",

"node_id": "MDQ6VXNlcjEyOTA3Mg==",

"organizations_url": "https://api.github.com/users/momeara/orgs",

"received_events_url": "https://api.github.com/users/momeara/received_events",

"repos_url": "https://api.github.com/users/momeara/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/momeara/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/momeara/subscriptions",

"type": "User",

"url": "https://api.github.com/users/momeara",

"user_view_type": "public"

} | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | open | false | null | [] | null | [] | "2025-01-21T22:35:07" | "2025-01-21T22:35:07" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Feature request

Currently, when datasets are created, they can be versioned by passing the `version` argument to `load_dataset(...)`. For example, creating `outcomes.csv` on the command line

```

printf "id,value\n1,0\n2,0\n3,1\n4,1\n" > outcomes.csv

```

and loading it

```

import datasets

dataset = datasets.load_dataset(

"csv",

data_files ="outcomes.csv",

keep_in_memory = True,

version = '1.0.0')

```

The version info is stored in the dataset's `info` and can be accessed with, e.g., `next(iter(dataset.values())).info.version`.

This dataset can be uploaded to the hub with `dataset.push_to_hub(repo_id = "maomlab/example_dataset")`. This will create a dataset on the hub with the following in the `README.md`, but it doesn't upload the version information:

```

---

dataset_info:

features:

- name: id

dtype: int64

- name: value

dtype: int64

splits:

- name: train

num_bytes: 64

num_examples: 4

download_size: 1332

dataset_size: 64

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

---

```

However, when I download from the hub, the version information is missing:

```

dataset_from_hub_no_version = datasets.load_dataset("maomlab/example_dataset")

next(iter(dataset_from_hub_no_version.values())).info.version

```

I can add the version information manually on the hub by appending it to the end of the config section:

```

...

configs:

- config_name: default

data_files:

- split: train

path: data/train-*

version: 1.0.0

---

```

And then when I download it, the version information is correct.

### Motivation

### Why adding version information for each config makes sense

1. The version information is already recorded in the dataset config info data structure, and the library already parses it correctly when it is present, so it makes sense to sync it with `push_to_hub`.

2. Keeping the version info at the config level is different from keeping it at the branch level: the former relates to the version of the specific dataset the config refers to, rather than the version of the dataset curation itself.

## An explanation for the current behavior:

In [datasets/src/datasets/info.py:159](https://github.com/huggingface/datasets/blob/fb91fd3c9ea91a818681a777faf8d0c46f14c680/src/datasets/info.py#L159C1-L160C1), the `_INCLUDED_INFO_IN_YAML` variable doesn't include `"version"`.

If my reading of the code is right, adding `"version"` to `_INCLUDED_INFO_IN_YAML` would allow the version information to be uploaded to the hub.
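To make the suspected mechanism concrete, here is a minimal, self-contained sketch of how an allow-list can drop a field during serialization. It does not import `datasets`; the list contents, the helper `info_to_yaml_dict`, and the sample `info` dict are all hypothetical stand-ins, so treat this as an illustration of the idea rather than the library's actual code.

```python
# Hypothetical stand-in for the allow-list discussed above; the real list
# lives in datasets/src/datasets/info.py and its contents may differ.
INCLUDED_INFO_IN_YAML = ["config_name", "download_size", "dataset_size", "features", "splits"]


def info_to_yaml_dict(info: dict, included_keys: list) -> dict:
    """Keep only the allow-listed fields that are present and not None."""
    return {key: info[key] for key in included_keys if info.get(key) is not None}


# Sample info dict (values borrowed from the README example above).
info = {
    "config_name": "default",
    "version": "1.0.0",
    "download_size": 1332,
    "dataset_size": 64,
}

without_version = info_to_yaml_dict(info, INCLUDED_INFO_IN_YAML)
with_version = info_to_yaml_dict(info, INCLUDED_INFO_IN_YAML + ["version"])

print("version" in without_version)  # False
print(with_version["version"])       # 1.0.0
```

If the real serialization works along these lines, a field that is absent from the allow-list never reaches the `README.md`, which would match the behavior described above.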

### Your contribution

Request: add `"version"` to `_INCLUDED_INFO_IN_YAML` in [datasets/src/datasets/info.py:159](https://github.com/huggingface/datasets/blob/fb91fd3c9ea91a818681a777faf8d0c46f14c680/src/datasets/info.py#L159C1-L160C1)

| null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7378/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7378/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7377 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7377/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7377/comments | https://api.github.com/repos/huggingface/datasets/issues/7377/events | https://github.com/huggingface/datasets/issues/7377 | 2,802,723,285 | I_kwDODunzps6nDinV | 7,377 | Support for sparse arrays with the Arrow Sparse Tensor format? | {

"avatar_url": "https://avatars.githubusercontent.com/u/3231217?v=4",

"events_url": "https://api.github.com/users/JulesGM/events{/privacy}",

"followers_url": "https://api.github.com/users/JulesGM/followers",

"following_url": "https://api.github.com/users/JulesGM/following{/other_user}",

"gists_url": "https://api.github.com/users/JulesGM/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/JulesGM",

"id": 3231217,

"login": "JulesGM",

"node_id": "MDQ6VXNlcjMyMzEyMTc=",

"organizations_url": "https://api.github.com/users/JulesGM/orgs",

"received_events_url": "https://api.github.com/users/JulesGM/received_events",

"repos_url": "https://api.github.com/users/JulesGM/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/JulesGM/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/JulesGM/subscriptions",

"type": "User",

"url": "https://api.github.com/users/JulesGM",

"user_view_type": "public"

} | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | open | false | null | [] | null | [] | "2025-01-21T20:14:35" | "2025-01-21T20:17:17" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Feature request

AI in biology is becoming a big thing. One thing that would be a huge benefit to the field, and that Hugging Face Datasets doesn't currently have, is native support for **sparse arrays**.

Arrow has support for sparse tensors.

https://arrow.apache.org/docs/format/Other.html#sparse-tensor

It would be a big deal if Hugging Face Datasets supported sparse tensors as a feature type, natively.

### Motivation

This is important, for example, in the field of transcriptomics (modeling and understanding gene expression), because a large fraction of the genes are not expressed (zero). More generally, sparse arrays are very common in science, so adding support for them would be very beneficial: it would make using Hugging Face Dataset objects a lot more straightforward and clean.
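As a rough illustration of why sparse storage matters here, the sketch below converts a mostly-zero expression vector to a coordinate (COO-style) pair of index and value lists and back. It uses plain Python only; it is not Arrow's Sparse Tensor format and not a Hugging Face Datasets API, and the helper names `to_coo` and `from_coo` are made up for this example.

```python
def to_coo(dense_row):
    """Return (indices, values) for the non-zero entries of a 1-D list."""
    indices = [i for i, x in enumerate(dense_row) if x != 0]
    values = [dense_row[i] for i in indices]
    return indices, values


def from_coo(indices, values, length):
    """Rebuild the dense 1-D list from its non-zero entries."""
    dense = [0] * length
    for i, v in zip(indices, values):
        dense[i] = v
    return dense


# A toy "gene expression" vector: most genes are not expressed.
expression = [0, 0, 5, 0, 0, 0, 2, 0]
indices, values = to_coo(expression)
print(indices, values)  # [2, 6] [5, 2]

# Round trip back to the dense representation.
restored = from_coo(indices, values, len(expression))
print(restored == expression)  # True
```

Only two of the eight entries need to be stored. Without native support, one possible workaround is to keep such index and value lists as two ordinary list columns, at the cost of converting by hand on every access.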

### Your contribution

We can discuss this further once the team has commented on what they think of the feature, whether there were previous attempts at making it work, and how hard they estimate it would be. My intuition is that it should be fairly straightforward, as the Arrow backend already supports it. | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7377/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7377/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7376 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7376/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7376/comments | https://api.github.com/repos/huggingface/datasets/issues/7376/events | https://github.com/huggingface/datasets/pull/7376 | 2,802,621,104 | PR_kwDODunzps6IiO9j | 7,376 | [docs] uv install | {

"avatar_url": "https://avatars.githubusercontent.com/u/59462357?v=4",

"events_url": "https://api.github.com/users/stevhliu/events{/privacy}",

"followers_url": "https://api.github.com/users/stevhliu/followers",

"following_url": "https://api.github.com/users/stevhliu/following{/other_user}",

"gists_url": "https://api.github.com/users/stevhliu/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/stevhliu",

"id": 59462357,

"login": "stevhliu",

"node_id": "MDQ6VXNlcjU5NDYyMzU3",

"organizations_url": "https://api.github.com/users/stevhliu/orgs",

"received_events_url": "https://api.github.com/users/stevhliu/received_events",

"repos_url": "https://api.github.com/users/stevhliu/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/stevhliu/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/stevhliu/subscriptions",

"type": "User",

"url": "https://api.github.com/users/stevhliu",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-21T19:15:48" | "2025-01-21T19:39:29" | 1970-01-01T00:00:00 | MEMBER | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | Proposes adding uv to installation docs (see Slack thread [here](https://huggingface.slack.com/archives/C01N44FJDHT/p1737377177709279) for more context) if you're interested! | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7376/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7376/timeline | null | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/7376.diff",

"html_url": "https://github.com/huggingface/datasets/pull/7376",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/7376.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7376"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/7375 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7375/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7375/comments | https://api.github.com/repos/huggingface/datasets/issues/7375/events | https://github.com/huggingface/datasets/issues/7375 | 2,800,609,218 | I_kwDODunzps6m7efC | 7,375 | vllm batch inference error | {

"avatar_url": "https://avatars.githubusercontent.com/u/51228154?v=4",

"events_url": "https://api.github.com/users/YuShengzuishuai/events{/privacy}",

"followers_url": "https://api.github.com/users/YuShengzuishuai/followers",

"following_url": "https://api.github.com/users/YuShengzuishuai/following{/other_user}",

"gists_url": "https://api.github.com/users/YuShengzuishuai/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/YuShengzuishuai",

"id": 51228154,

"login": "YuShengzuishuai",

"node_id": "MDQ6VXNlcjUxMjI4MTU0",

"organizations_url": "https://api.github.com/users/YuShengzuishuai/orgs",

"received_events_url": "https://api.github.com/users/YuShengzuishuai/received_events",

"repos_url": "https://api.github.com/users/YuShengzuishuai/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/YuShengzuishuai/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/YuShengzuishuai/subscriptions",

"type": "User",

"url": "https://api.github.com/users/YuShengzuishuai",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-21T03:22:23" | "2025-01-21T03:22:23" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

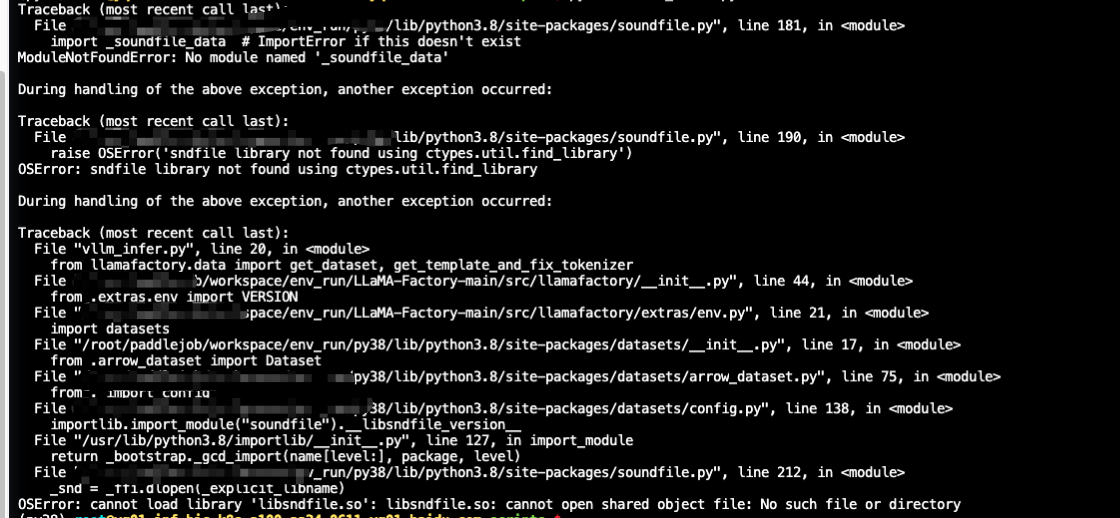

} | null | ### Describe the bug

### Steps to reproduce the bug

### Expected behavior

### Environment info

| null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7375/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7375/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7374 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7374/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7374/comments | https://api.github.com/repos/huggingface/datasets/issues/7374/events | https://github.com/huggingface/datasets/pull/7374 | 2,793,442,320 | PR_kwDODunzps6IC66n | 7,374 | Remove .h5 from imagefolder extensions | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | [] | closed | false | null | [] | null | [] | "2025-01-16T18:17:24" | "2025-01-16T18:26:40" | 2025-01-16T18:26:38 | MEMBER | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | The .h5 format is not relevant for imagefolder, and it makes the viewer fail to process datasets on HF (so many that the viewer takes more time to process new datasets). | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7374/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7374/timeline | null | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/7374.diff",

"html_url": "https://github.com/huggingface/datasets/pull/7374",

"merged_at": "2025-01-16T18:26:38Z",

"patch_url": "https://github.com/huggingface/datasets/pull/7374.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7374"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/7373 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7373/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7373/comments | https://api.github.com/repos/huggingface/datasets/issues/7373/events | https://github.com/huggingface/datasets/issues/7373 | 2,793,237,139 | I_kwDODunzps6mfWqT | 7,373 | Excessive RAM Usage After Dataset Concatenation concatenate_datasets | {

"avatar_url": "https://avatars.githubusercontent.com/u/40773225?v=4",

"events_url": "https://api.github.com/users/sam-hey/events{/privacy}",

"followers_url": "https://api.github.com/users/sam-hey/followers",

"following_url": "https://api.github.com/users/sam-hey/following{/other_user}",

"gists_url": "https://api.github.com/users/sam-hey/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/sam-hey",

"id": 40773225,

"login": "sam-hey",

"node_id": "MDQ6VXNlcjQwNzczMjI1",

"organizations_url": "https://api.github.com/users/sam-hey/orgs",

"received_events_url": "https://api.github.com/users/sam-hey/received_events",

"repos_url": "https://api.github.com/users/sam-hey/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/sam-hey/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/sam-hey/subscriptions",

"type": "User",

"url": "https://api.github.com/users/sam-hey",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-16T16:33:10" | "2025-01-17T08:05:22" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

When loading a dataset from disk, concatenating it, and starting the training process, the RAM usage progressively increases until the kernel terminates the process due to excessive memory consumption.

https://github.com/huggingface/datasets/issues/2276

### Steps to reproduce the bug

```

from datasets import DatasetDict, concatenate_datasets

dataset = DatasetDict.load_from_disk("data")

...

...

combined_dataset = concatenate_datasets(

[dataset[split] for split in dataset]

)

# start SentenceTransformer training

```

### Expected behavior

I would not expect RAM utilization to increase after concatenation. Removing the concatenation step resolves the issue.

### Environment info

sentence-transformers==3.1.1

datasets==3.2.0

python3.10 | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7373/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7373/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7372 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7372/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7372/comments | https://api.github.com/repos/huggingface/datasets/issues/7372/events | https://github.com/huggingface/datasets/issues/7372 | 2,791,760,968 | I_kwDODunzps6mZuRI | 7,372 | Inconsistent Behavior Between `load_dataset` and `load_from_disk` When Loading Sharded Datasets | {

"avatar_url": "https://avatars.githubusercontent.com/u/38203359?v=4",

"events_url": "https://api.github.com/users/gaohongkui/events{/privacy}",

"followers_url": "https://api.github.com/users/gaohongkui/followers",

"following_url": "https://api.github.com/users/gaohongkui/following{/other_user}",

"gists_url": "https://api.github.com/users/gaohongkui/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/gaohongkui",

"id": 38203359,

"login": "gaohongkui",

"node_id": "MDQ6VXNlcjM4MjAzMzU5",

"organizations_url": "https://api.github.com/users/gaohongkui/orgs",

"received_events_url": "https://api.github.com/users/gaohongkui/received_events",

"repos_url": "https://api.github.com/users/gaohongkui/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/gaohongkui/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/gaohongkui/subscriptions",

"type": "User",

"url": "https://api.github.com/users/gaohongkui",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-16T05:47:20" | "2025-01-16T05:47:20" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Description

I encountered an inconsistency in behavior between `load_dataset` and `load_from_disk` when loading sharded datasets. Here is a minimal example to reproduce the issue:

#### Code 1: Using `load_dataset`

```python

from datasets import Dataset, load_dataset

# First save with max_shard_size=10

Dataset.from_dict({"id": range(1000)}).train_test_split(test_size=0.1).save_to_disk("my_sharded_datasetdict", max_shard_size=10)

# Second save with max_shard_size=10

Dataset.from_dict({"id": range(500)}).train_test_split(test_size=0.1).save_to_disk("my_sharded_datasetdict", max_shard_size=10)

# Load the DatasetDict

loaded_datasetdict = load_dataset("my_sharded_datasetdict")

print(loaded_datasetdict)

```

**Output**:

- `train` has 1350 samples.

- `test` has 150 samples.

#### Code 2: Using `load_from_disk`

```python

from datasets import Dataset, load_from_disk

# First save with max_shard_size=10

Dataset.from_dict({"id": range(1000)}).train_test_split(test_size=0.1).save_to_disk("my_sharded_datasetdict", max_shard_size=10)

# Second save with max_shard_size=10

Dataset.from_dict({"id": range(500)}).train_test_split(test_size=0.1).save_to_disk("my_sharded_datasetdict", max_shard_size=10)

# Load the DatasetDict

loaded_datasetdict = load_from_disk("my_sharded_datasetdict")

print(loaded_datasetdict)

```

**Output**:

- `train` has 450 samples.

- `test` has 50 samples.

### Expected Behavior

I expected both `load_dataset` and `load_from_disk` to load the same dataset, as they are pointing to the same directory. However, the results differ significantly:

- `load_dataset` seems to merge all shards, resulting in a combined dataset.

- `load_from_disk` only loads the last saved dataset, ignoring previous shards.
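One possible explanation, offered as an assumption rather than something confirmed here, is that one loader reads a manifest written by the most recent save while the other picks up every data file in the directory. The toy sketch below reproduces that difference with the standard library only; the shard file names, the `state.json` key `data_files`, and the three helper functions are hypothetical and are not the `datasets` implementation.

```python
import json
import tempfile
from pathlib import Path


def save(directory: Path, shard_names: list) -> None:
    """Write shard files plus a manifest naming only the shards of this save."""
    directory.mkdir(parents=True, exist_ok=True)
    for name in shard_names:
        (directory / name).write_text("rows")
    (directory / "state.json").write_text(json.dumps({"data_files": shard_names}))


def load_by_manifest(directory: Path) -> list:
    """Load only the shards listed by the most recent save."""
    return json.loads((directory / "state.json").read_text())["data_files"]


def load_by_glob(directory: Path) -> list:
    """Load every shard file found in the directory, stale or not."""
    return sorted(p.name for p in directory.glob("*.arrow"))


with tempfile.TemporaryDirectory() as tmp:
    train_dir = Path(tmp) / "train"
    # First, larger save: four shards.
    save(train_dir, [f"data-{i:05d}-of-00004.arrow" for i in range(4)])
    # Second, smaller save into the same directory: two shards, old files remain.
    save(train_dir, [f"data-{i:05d}-of-00002.arrow" for i in range(2)])
    manifest_files = load_by_manifest(train_dir)
    glob_files = load_by_glob(train_dir)

print(len(manifest_files))  # 2
print(len(glob_files))      # 6
```

This would be consistent with the numbers reported above: 900 + 450 = 1350 for `train` and 100 + 50 = 150 for `test` when both saves are merged, versus 450 and 50 when only the last save is read.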

### Questions

1. Is this behavior intentional? If so, could you clarify the difference between `load_dataset` and `load_from_disk` in the documentation?

2. If this is not intentional, could this be considered a bug?

3. What is the recommended way to handle cases where multiple datasets are saved to the same directory?

Thank you for your time and effort in maintaining this great library! I look forward to your feedback. | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7372/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7372/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7371 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7371/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7371/comments | https://api.github.com/repos/huggingface/datasets/issues/7371/events | https://github.com/huggingface/datasets/issues/7371 | 2,790,549,889 | I_kwDODunzps6mVGmB | 7,371 | 500 Server error with pushing a dataset | {

"avatar_url": "https://avatars.githubusercontent.com/u/7677814?v=4",

"events_url": "https://api.github.com/users/martinmatak/events{/privacy}",

"followers_url": "https://api.github.com/users/martinmatak/followers",

"following_url": "https://api.github.com/users/martinmatak/following{/other_user}",

"gists_url": "https://api.github.com/users/martinmatak/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/martinmatak",

"id": 7677814,

"login": "martinmatak",

"node_id": "MDQ6VXNlcjc2Nzc4MTQ=",

"organizations_url": "https://api.github.com/users/martinmatak/orgs",

"received_events_url": "https://api.github.com/users/martinmatak/received_events",

"repos_url": "https://api.github.com/users/martinmatak/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/martinmatak/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/martinmatak/subscriptions",

"type": "User",

"url": "https://api.github.com/users/martinmatak",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-15T18:23:02" | "2025-01-15T20:06:05" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

Suddenly, I started getting this error message saying it was an internal error.

`Error creating/pushing dataset: 500 Server Error: Internal Server Error for url: https://huggingface.co./api/datasets/ll4ma-lab/grasp-dataset/commit/main (Request ID: Root=1-6787f0b7-66d5bd45413e481c4c2fb22d;670d04ff-65f5-4741-a353-2eacc47a3928)

Internal Error - We're working hard to fix this as soon as possible!

Traceback (most recent call last):

File "/uufs/chpc.utah.edu/common/home/hermans-group1/martin/software/pkg/miniforge3/envs/myenv2/lib/python3.10/site-packages/huggingface_hub/utils/_http.py", line 406, in hf_raise_for_status

response.raise_for_status()

File "/uufs/chpc.utah.edu/common/home/hermans-group1/martin/software/pkg/miniforge3/envs/myenv2/lib/python3.10/site-packages/requests/models.py", line 1024, in raise_for_status

raise HTTPError(http_error_msg, response=self)

requests.exceptions.HTTPError: 500 Server Error: Internal Server Error for url: https://huggingface.co./api/datasets/ll4ma-lab/grasp-dataset/commit/main

The above exception was the direct cause of the following exception:

Traceback (most recent call last):

File "/uufs/chpc.utah.edu/common/home/u1295595/grasp_dataset_converter/src/grasp_dataset_converter/main.py", line 142, in main

subset_train.push_to_hub(dataset_name, split='train')

File "/uufs/chpc.utah.edu/common/home/hermans-group1/martin/software/pkg/miniforge3/envs/myenv2/lib/python3.10/site-packages/datasets/arrow_dataset.py", line 5624, in push_to_hub

commit_info = api.create_commit(

File "/uufs/chpc.utah.edu/common/home/hermans-group1/martin/software/pkg/miniforge3/envs/myenv2/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

return fn(*args, **kwargs)

File "/uufs/chpc.utah.edu/common/home/hermans-group1/martin/software/pkg/miniforge3/envs/myenv2/lib/python3.10/site-packages/huggingface_hub/hf_api.py", line 1518, in _inner

return fn(self, *args, **kwargs)

File "/uufs/chpc.utah.edu/common/home/hermans-group1/martin/software/pkg/miniforge3/envs/myenv2/lib/python3.10/site-packages/huggingface_hub/hf_api.py", line 4087, in create_commit

hf_raise_for_status(commit_resp, endpoint_name="commit")

File "/uufs/chpc.utah.edu/common/home/hermans-group1/martin/software/pkg/miniforge3/envs/myenv2/lib/python3.10/site-packages/huggingface_hub/utils/_http.py", line 477, in hf_raise_for_status

raise _format(HfHubHTTPError, str(e), response) from e

huggingface_hub.errors.HfHubHTTPError: 500 Server Error: Internal Server Error for url: https://huggingface.co./api/datasets/ll4ma-lab/grasp-dataset/commit/main (Request ID: Root=1-6787f0b7-66d5bd45413e481c4c2fb22d;670d04ff-65f5-4741-a353-2eacc47a3928)

Internal Error - We're working hard to fix this as soon as possible!`

### Steps to reproduce the bug

I am pushing a Dataset in a loop via the `push_to_hub` API.

### Expected behavior

It worked fine until it stopped working suddenly.

Expected behavior: It should start working again

### Environment info

- `datasets` version: 3.2.0

- Platform: Linux-4.18.0-477.15.1.el8_8.x86_64-x86_64-with-glibc2.28

- Python version: 3.10.0

- `huggingface_hub` version: 0.27.1

- PyArrow version: 18.1.0

- Pandas version: 2.2.3

- `fsspec` version: 2024.9.0

| null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7371/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7371/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7370 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7370/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7370/comments | https://api.github.com/repos/huggingface/datasets/issues/7370/events | https://github.com/huggingface/datasets/pull/7370 | 2,787,972,786 | PR_kwDODunzps6HwAu7 | 7,370 | Support faster processing using pandas or polars functions in `IterableDataset.map()` | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-14T18:14:13" | "2025-01-14T18:30:13" | 1970-01-01T00:00:00 | MEMBER | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | Allow super fast processing using pandas or polars functions in `IterableDataset.map()` by adding support for pandas and polars formatting in `IterableDataset`

```python

import polars as pl

from datasets import Dataset

ds = Dataset.from_dict({"i": range(10)}).to_iterable_dataset()

ds = ds.with_format("polars")

ds = ds.map(lambda df: df.with_columns(pl.col("i"), pl.col("i").add(1).alias("i+1")), batched=True)

ds = ds.with_format(None)

print(next(iter(ds)))

# {'i': 0, 'i+1': 1}

```

It leverages arrow's zero-copy features from/to pandas and polars.

related to https://github.com/huggingface/datasets/issues/3444 #6762 | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7370/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7370/timeline | null | null | 1 | {

"diff_url": "https://github.com/huggingface/datasets/pull/7370.diff",

"html_url": "https://github.com/huggingface/datasets/pull/7370",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/7370.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7370"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/7369 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7369/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7369/comments | https://api.github.com/repos/huggingface/datasets/issues/7369/events | https://github.com/huggingface/datasets/issues/7369 | 2,787,193,238 | I_kwDODunzps6mITGW | 7,369 | Importing dataset gives unhelpful error message when filenames in metadata.csv are not found in the directory | {

"avatar_url": "https://avatars.githubusercontent.com/u/38278139?v=4",

"events_url": "https://api.github.com/users/svencornetsdegroot/events{/privacy}",

"followers_url": "https://api.github.com/users/svencornetsdegroot/followers",

"following_url": "https://api.github.com/users/svencornetsdegroot/following{/other_user}",

"gists_url": "https://api.github.com/users/svencornetsdegroot/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/svencornetsdegroot",

"id": 38278139,

"login": "svencornetsdegroot",

"node_id": "MDQ6VXNlcjM4Mjc4MTM5",

"organizations_url": "https://api.github.com/users/svencornetsdegroot/orgs",

"received_events_url": "https://api.github.com/users/svencornetsdegroot/received_events",

"repos_url": "https://api.github.com/users/svencornetsdegroot/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/svencornetsdegroot/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/svencornetsdegroot/subscriptions",

"type": "User",

"url": "https://api.github.com/users/svencornetsdegroot",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-14T13:53:21" | "2025-01-14T15:05:51" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

While importing an audiofolder dataset in which the names of the audio files don't correspond to the filenames in metadata.csv, we get an unclear error message that is not helpful for debugging:

```

ValueError: Instruction "train" corresponds to no data!

```

### Steps to reproduce the bug

Assume an audiofolder containing audio files (filename1.mp3, filename2.mp3, etc.) and a metadata.csv file with the columns `file_name` and `sentence`. The `file_name` values are formatted like filename1.mp3, filename2.mp3, etc.

Load the audio:

```

from datasets import load_dataset

load_dataset("audiofolder", data_dir='/path/to/audiofolder')

```

When the `file_name` values in the CSV are out of sync with the filenames in the audiofolder, we get this error:

```

File /opt/conda/lib/python3.12/site-packages/datasets/arrow_reader.py:251, in BaseReader.read(self, name, instructions, split_infos, in_memory)

249 if not files:

250 msg = f'Instruction "{instructions}" corresponds to no data!'

--> 251 raise ValueError(msg)

252 return self.read_files(files=files, original_instructions=instructions, in_memory=in_memory)

ValueError: Instruction "train" corresponds to no data!

```

The `"train"` in the message comes from the default split: when no split is specified, the audiofolder data is placed in a `train` split.

### Expected behavior

It would be better to get an error message along the lines of:

```

The filenames listed in metadata.csv do not match the files in the data directory.

```

It would have saved me 4 hours of debugging.
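
Until the error message improves, a mismatch like this can be checked by hand. The following is a minimal sketch using only the standard library; the helper name `find_missing_files` and its parameters are hypothetical and not part of `datasets`. It assumes `file_name` values are paths relative to the directory that holds metadata.csv.

```python
import csv
from pathlib import Path


def find_missing_files(data_dir, metadata_name="metadata.csv", column="file_name"):
    """Return file_name entries from the metadata CSV that do not exist under data_dir."""
    data_dir = Path(data_dir)
    with open(data_dir / metadata_name, newline="", encoding="utf-8") as f:
        listed = [row[column] for row in csv.DictReader(f)]
    # Each entry is resolved relative to the directory that contains the CSV
    return [name for name in listed if not (data_dir / name).is_file()]
```

Calling `find_missing_files("/path/to/audiofolder")` before `load_dataset` returns an empty list when the CSV and the directory are in sync, and the offending entries otherwise.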

### Environment info

- `datasets` version: 3.2.0

- Platform: Linux-5.14.0-427.40.1.el9_4.x86_64-x86_64-with-glibc2.39

- Python version: 3.12.8

- `huggingface_hub` version: 0.27.0

- PyArrow version: 18.1.0

- Pandas version: 2.2.3

- `fsspec` version: 2024.9.0 | null | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7369/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7369/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7368 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7368/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7368/comments | https://api.github.com/repos/huggingface/datasets/issues/7368/events | https://github.com/huggingface/datasets/pull/7368 | 2,784,272,477 | PR_kwDODunzps6HjE97 | 7,368 | Add with_split to DatasetDict.map | {

"avatar_url": "https://avatars.githubusercontent.com/u/93233241?v=4",

"events_url": "https://api.github.com/users/jp1924/events{/privacy}",

"followers_url": "https://api.github.com/users/jp1924/followers",

"following_url": "https://api.github.com/users/jp1924/following{/other_user}",

"gists_url": "https://api.github.com/users/jp1924/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/jp1924",

"id": 93233241,

"login": "jp1924",

"node_id": "U_kgDOBY6gWQ",

"organizations_url": "https://api.github.com/users/jp1924/orgs",

"received_events_url": "https://api.github.com/users/jp1924/received_events",

"repos_url": "https://api.github.com/users/jp1924/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/jp1924/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jp1924/subscriptions",

"type": "User",

"url": "https://api.github.com/users/jp1924",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-13T15:09:56" | "2025-01-22T02:32:11" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | #7356 | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7368/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7368/timeline | null | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/7368.diff",

"html_url": "https://github.com/huggingface/datasets/pull/7368",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/7368.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7368"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/7366 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7366/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7366/comments | https://api.github.com/repos/huggingface/datasets/issues/7366/events | https://github.com/huggingface/datasets/issues/7366 | 2,781,522,894 | I_kwDODunzps6lyqvO | 7,366 | Dataset.from_dict() can't handle large dict | {

"avatar_url": "https://avatars.githubusercontent.com/u/164967134?v=4",

"events_url": "https://api.github.com/users/CSU-OSS/events{/privacy}",

"followers_url": "https://api.github.com/users/CSU-OSS/followers",

"following_url": "https://api.github.com/users/CSU-OSS/following{/other_user}",

"gists_url": "https://api.github.com/users/CSU-OSS/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/CSU-OSS",

"id": 164967134,

"login": "CSU-OSS",

"node_id": "U_kgDOCdUy3g",

"organizations_url": "https://api.github.com/users/CSU-OSS/orgs",

"received_events_url": "https://api.github.com/users/CSU-OSS/received_events",

"repos_url": "https://api.github.com/users/CSU-OSS/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/CSU-OSS/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/CSU-OSS/subscriptions",

"type": "User",

"url": "https://api.github.com/users/CSU-OSS",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-11T02:05:21" | "2025-01-11T02:05:21" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

I have 26,000,000 3-tuples. When I use Dataset.from_dict() to load them, neither a .py script nor a Jupyter notebook can run successfully. This is my code:

```

from datasets import Dataset

# len(example_data) is 26,000,000; each 'diff' is a text string
diff1_list = [example_data[i].texts[0] for i in range(len(example_data))]
diff2_list = [example_data[i].texts[1] for i in range(len(example_data))]
label_list = [example_data[i].label for i in range(len(example_data))]

# Build the dataset from three equally long columns
embedding_dataset = Dataset.from_dict({
    "diff1": diff1_list,
    "diff2": diff2_list,
    "label": label_list,
})

```

### Steps to reproduce the bug

1. Create a large list of 3-tuples, e.g. 26,000,000 items

2. Use Dataset.from_dict() to load

### Expected behavior

Dataset.from_dict() runs successfully.

### Environment info

sentence-transformers 3.3.1 | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7366/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7366/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7365 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7365/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7365/comments | https://api.github.com/repos/huggingface/datasets/issues/7365/events | https://github.com/huggingface/datasets/issues/7365 | 2,780,216,199 | I_kwDODunzps6ltruH | 7,365 | A parameter is specified but not used in datasets.arrow_dataset.Dataset.from_pandas() | {

"avatar_url": "https://avatars.githubusercontent.com/u/69003192?v=4",

"events_url": "https://api.github.com/users/NourOM02/events{/privacy}",

"followers_url": "https://api.github.com/users/NourOM02/followers",

"following_url": "https://api.github.com/users/NourOM02/following{/other_user}",

"gists_url": "https://api.github.com/users/NourOM02/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/NourOM02",

"id": 69003192,

"login": "NourOM02",

"node_id": "MDQ6VXNlcjY5MDAzMTky",

"organizations_url": "https://api.github.com/users/NourOM02/orgs",

"received_events_url": "https://api.github.com/users/NourOM02/received_events",

"repos_url": "https://api.github.com/users/NourOM02/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/NourOM02/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/NourOM02/subscriptions",

"type": "User",

"url": "https://api.github.com/users/NourOM02",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-10T13:39:33" | "2025-01-10T13:39:33" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

I am interested in creating train, test and eval splits from a pandas DataFrame, so I looked at the available options. I noticed the `split` parameter and hoped to use it to generate all three at once. However, while reading the code, I noticed that it seems to add nothing (correct me if I am wrong or have misunderstood the code).

`from_pandas` function code:

```python

if info is not None and features is not None and info.features != features:
    raise ValueError(
        f"Features specified in `features` and `info.features` can't be different:\n{features}\n{info.features}"
    )
features = features if features is not None else info.features if info is not None else None
if info is None:
    info = DatasetInfo()
info.features = features
table = InMemoryTable.from_pandas(
    df=df,
    preserve_index=preserve_index,
)
if features is not None:
    # more expensive cast than InMemoryTable.from_pandas(..., schema=features.arrow_schema)
    # needed to support the str to Audio conversion for instance
    table = table.cast(features.arrow_schema)
return cls(table, info=info, split=split)

```

### Steps to reproduce the bug

```python

from datasets import Dataset

# Filling the split parameter with whatever causes no harm at all

data = Dataset.from_pandas(self.raw_data, split='egiojegoierjgoiejgrefiergiuorenvuirgurthgi')

```

### Expected behavior

It would be great to either remove the `split` parameter (if it has no effect) or add a concrete example of how it can be used.

### Environment info

- `datasets` version: 3.2.0

- Platform: Linux-5.15.0-127-generic-x86_64-with-glibc2.35

- Python version: 3.10.12

- `huggingface_hub` version: 0.27.1

- PyArrow version: 18.1.0

- Pandas version: 2.2.3

- `fsspec` version: 2024.9.0 | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7365/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7365/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7364 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7364/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7364/comments | https://api.github.com/repos/huggingface/datasets/issues/7364/events | https://github.com/huggingface/datasets/issues/7364 | 2,776,929,268 | I_kwDODunzps6lhJP0 | 7,364 | API endpoints for gated dataset access requests | {

"avatar_url": "https://avatars.githubusercontent.com/u/6140840?v=4",

"events_url": "https://api.github.com/users/jerome-white/events{/privacy}",

"followers_url": "https://api.github.com/users/jerome-white/followers",

"following_url": "https://api.github.com/users/jerome-white/following{/other_user}",

"gists_url": "https://api.github.com/users/jerome-white/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/jerome-white",

"id": 6140840,

"login": "jerome-white",

"node_id": "MDQ6VXNlcjYxNDA4NDA=",

"organizations_url": "https://api.github.com/users/jerome-white/orgs",

"received_events_url": "https://api.github.com/users/jerome-white/received_events",

"repos_url": "https://api.github.com/users/jerome-white/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/jerome-white/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jerome-white/subscriptions",

"type": "User",

"url": "https://api.github.com/users/jerome-white",

"user_view_type": "public"

} | [

{

"color": "a2eeef",

"default": true,

"description": "New feature or request",

"id": 1935892871,

"name": "enhancement",

"node_id": "MDU6TGFiZWwxOTM1ODkyODcx",

"url": "https://api.github.com/repos/huggingface/datasets/labels/enhancement"

}

] | closed | false | null | [] | null | [] | "2025-01-09T06:21:20" | "2025-01-09T11:17:40" | 2025-01-09T11:17:20 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Feature request

I would like a programmatic way of requesting access to gated datasets. The current way to gain access forces me to visit a website and physically click an "agreement" button (as per the [documentation](https://huggingface.co./docs/hub/en/datasets-gated#access-gated-datasets-as-a-user)).

An ideal approach would be HF API download methods that negotiate access on my behalf based on information from my CLI login and/or token. I realise that may be naive given the various types of access semantics available to dataset authors (automatic versus manual approval, for example) and complexities it might add to existing methods, but something along those lines would be nice.

Perhaps using the `*_access_request` methods available to dataset authors can be a precedent; see [`reject_access_request`](https://huggingface.co./docs/huggingface_hub/main/en/package_reference/hf_api#huggingface_hub.HfApi.reject_access_request) for example.

### Motivation

When trying to download files from a gated dataset, I'm met with a `GatedRepoError` and instructed to visit the repository's website to gain access:

```

Cannot access gated repo for url https://huggingface.co./datasets/open-llm-leaderboard/meta-llama__Meta-Llama-3.1-70B-Instruct-details/resolve/main/meta-llama__Meta-Llama-3.1-70B-Instruct/samples_leaderboard_math_precalculus_hard_2024-07-19T18-47-29.522341.jsonl.

Access to dataset open-llm-leaderboard/meta-llama__Meta-Llama-3.1-70B-Instruct-details is restricted and you are not in the authorized list. Visit https://huggingface.co./datasets/open-llm-leaderboard/meta-llama__Meta-Llama-3.1-70B-Instruct-details to ask for access.

```

This makes task automation extremely difficult. For example, I'm interested in studying sample-level responses of models on the LLM leaderboard -- how they answered particular questions on a given evaluation framework. As I come across more and more participants that gate their data, it's becoming unwieldy to continue my work (there are over 2,000 participants, so in the worst case that's the number of website visits I'd need to undertake manually).

One approach is to use Selenium to react to the `GatedRepoError`, but that seems like overkill, and a potential violation of the HF terms of service (?).

As mentioned in the previous section, there seems to be an [API for gated dataset owners](https://huggingface.co./docs/hub/en/datasets-gated#via-the-api) to manage access requests, and thus some appetite for allowing automated management of gating. This feature request is to extend that to dataset users.

### Your contribution

Whether I can help depends on a few things; one being the complexity of the underlying gated access design. If this feature request is accepted I am open to being involved in discussions and testing, and even development under the right time-outcome tradeoff. | {

"avatar_url": "https://avatars.githubusercontent.com/u/6140840?v=4",

"events_url": "https://api.github.com/users/jerome-white/events{/privacy}",

"followers_url": "https://api.github.com/users/jerome-white/followers",

"following_url": "https://api.github.com/users/jerome-white/following{/other_user}",

"gists_url": "https://api.github.com/users/jerome-white/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/jerome-white",

"id": 6140840,

"login": "jerome-white",

"node_id": "MDQ6VXNlcjYxNDA4NDA=",

"organizations_url": "https://api.github.com/users/jerome-white/orgs",

"received_events_url": "https://api.github.com/users/jerome-white/received_events",

"repos_url": "https://api.github.com/users/jerome-white/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/jerome-white/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jerome-white/subscriptions",

"type": "User",

"url": "https://api.github.com/users/jerome-white",

"user_view_type": "public"

} | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7364/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7364/timeline | null | not_planned | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7363 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7363/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7363/comments | https://api.github.com/repos/huggingface/datasets/issues/7363/events | https://github.com/huggingface/datasets/issues/7363 | 2,774,090,012 | I_kwDODunzps6lWUEc | 7,363 | ImportError: To support decoding images, please install 'Pillow'. | {

"avatar_url": "https://avatars.githubusercontent.com/u/1394644?v=4",

"events_url": "https://api.github.com/users/jamessdixon/events{/privacy}",

"followers_url": "https://api.github.com/users/jamessdixon/followers",

"following_url": "https://api.github.com/users/jamessdixon/following{/other_user}",

"gists_url": "https://api.github.com/users/jamessdixon/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/jamessdixon",

"id": 1394644,

"login": "jamessdixon",

"node_id": "MDQ6VXNlcjEzOTQ2NDQ=",

"organizations_url": "https://api.github.com/users/jamessdixon/orgs",

"received_events_url": "https://api.github.com/users/jamessdixon/received_events",

"repos_url": "https://api.github.com/users/jamessdixon/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/jamessdixon/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/jamessdixon/subscriptions",

"type": "User",

"url": "https://api.github.com/users/jamessdixon",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-08T02:22:57" | "2025-01-16T08:54:47" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

I am following this tutorial locally on a MacBook with VSCode: https://huggingface.co./docs/diffusers/en/tutorials/basic_training

This line of code: `for i, image in enumerate(dataset[:4]["image"]):`

throws: `ImportError: To support decoding images, please install 'Pillow'.`

Pillow is installed.
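
One thing worth checking is whether the interpreter that runs the notebook is the same one Pillow was installed into. That mismatch is only an assumption here; nothing in this report confirms it. The sketch below uses only the standard library, and the helper name `pillow_visibility` is hypothetical. It reports the active interpreter and whether that interpreter can locate the `PIL` package.

```python
import importlib.util
import sys


def pillow_visibility():
    """Report which interpreter is running and whether it can locate the PIL package."""
    spec = importlib.util.find_spec("PIL")
    return {
        "interpreter": sys.executable,
        "pil_found": spec is not None,
        "pil_location": spec.origin if spec is not None else None,
    }


print(pillow_visibility())
```

If `pil_found` is `False` when run from the notebook, Pillow was likely installed into a different environment than the one the kernel uses.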

### Steps to reproduce the bug

Run the tutorial

### Expected behavior

Images should be rendered

### Environment info

MacBook, VSCode | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7363/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7363/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7362 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7362/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7362/comments | https://api.github.com/repos/huggingface/datasets/issues/7362/events | https://github.com/huggingface/datasets/issues/7362 | 2,773,731,829 | I_kwDODunzps6lU8n1 | 7,362 | HuggingFace CLI dataset download raises error | {

"avatar_url": "https://avatars.githubusercontent.com/u/3870355?v=4",

"events_url": "https://api.github.com/users/ajayvohra2005/events{/privacy}",

"followers_url": "https://api.github.com/users/ajayvohra2005/followers",

"following_url": "https://api.github.com/users/ajayvohra2005/following{/other_user}",

"gists_url": "https://api.github.com/users/ajayvohra2005/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/ajayvohra2005",

"id": 3870355,

"login": "ajayvohra2005",

"node_id": "MDQ6VXNlcjM4NzAzNTU=",

"organizations_url": "https://api.github.com/users/ajayvohra2005/orgs",

"received_events_url": "https://api.github.com/users/ajayvohra2005/received_events",

"repos_url": "https://api.github.com/users/ajayvohra2005/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/ajayvohra2005/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/ajayvohra2005/subscriptions",

"type": "User",

"url": "https://api.github.com/users/ajayvohra2005",

"user_view_type": "public"

} | [] | closed | false | null | [] | null | [] | "2025-01-07T21:03:30" | "2025-01-08T15:00:37" | 2025-01-08T14:35:52 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

Downloading Hugging Face datasets with the Hugging Face CLI raises an error. The error only started appearing after December 27th, 2024. For example:

```

huggingface-cli download --repo-type dataset gboleda/wikicorpus

Traceback (most recent call last):

File "/home/ubuntu/test_venv/bin/huggingface-cli", line 8, in <module>

sys.exit(main())

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/commands/huggingface_cli.py", line 51, in main

service.run()

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/commands/download.py", line 146, in run

print(self._download()) # Print path to downloaded files

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/commands/download.py", line 180, in _download

return snapshot_download(

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

return fn(*args, **kwargs)

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/_snapshot_download.py", line 164, in snapshot_download

repo_info = api.repo_info(repo_id=repo_id, repo_type=repo_type, revision=revision, token=token)

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

return fn(*args, **kwargs)

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/hf_api.py", line 2491, in repo_info

return method(

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/utils/_validators.py", line 114, in _inner_fn

return fn(*args, **kwargs)

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/hf_api.py", line 2366, in dataset_info

return DatasetInfo(**data)

File "/home/ubuntu/test_venv/lib/python3.10/site-packages/huggingface_hub/hf_api.py", line 799, in __init__

self.tags = kwargs.pop("tags")

KeyError: 'tags'

```
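
The last frame points at `kwargs.pop("tags")`, which raises `KeyError` whenever the payload has no `tags` field. Whether the server response actually stopped including that field is an assumption. The class below is a hypothetical stand-in used only to illustrate the failure mode, not the `huggingface_hub` implementation.

```python
class RepoInfoSketch:
    """Hypothetical stand-in for a response wrapper; not the huggingface_hub class."""

    def __init__(self, strict=True, **kwargs):
        self.id = kwargs.pop("id")
        if strict:
            # Raises KeyError when the payload has no "tags" field
            self.tags = kwargs.pop("tags")
        else:
            # Falls back to None when the field is absent
            self.tags = kwargs.pop("tags", None)


payload = {"id": "gboleda/wikicorpus"}  # illustrative payload without "tags"

try:
    RepoInfoSketch(**payload)
except KeyError as err:
    print(f"strict parsing failed: KeyError {err}")

print(RepoInfoSketch(strict=False, **payload).tags)
```

Parsing with a default tolerates a missing field, which is why a client release that reads optional keys defensively would not hit this traceback.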

### Steps to reproduce the bug

```

1. huggingface-cli download --repo-type dataset gboleda/wikicorpus

```

### Expected behavior

There should be no error.

### Environment info

- `datasets` version: 2.19.1

- Platform: Linux-6.8.0-1015-aws-x86_64-with-glibc2.35

- Python version: 3.10.12

- `huggingface_hub` version: 0.23.5

- PyArrow version: 18.1.0

- Pandas version: 2.2.3

- `fsspec` version: 2024.3.1 | {

"avatar_url": "https://avatars.githubusercontent.com/u/42851186?v=4",

"events_url": "https://api.github.com/users/lhoestq/events{/privacy}",

"followers_url": "https://api.github.com/users/lhoestq/followers",

"following_url": "https://api.github.com/users/lhoestq/following{/other_user}",

"gists_url": "https://api.github.com/users/lhoestq/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/lhoestq",

"id": 42851186,

"login": "lhoestq",

"node_id": "MDQ6VXNlcjQyODUxMTg2",

"organizations_url": "https://api.github.com/users/lhoestq/orgs",

"received_events_url": "https://api.github.com/users/lhoestq/received_events",

"repos_url": "https://api.github.com/users/lhoestq/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/lhoestq/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/lhoestq/subscriptions",

"type": "User",

"url": "https://api.github.com/users/lhoestq",

"user_view_type": "public"

} | {

"+1": 1,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 1,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7362/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7362/timeline | null | completed | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7361 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7361/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7361/comments | https://api.github.com/repos/huggingface/datasets/issues/7361/events | https://github.com/huggingface/datasets/pull/7361 | 2,771,859,244 | PR_kwDODunzps6G4t2p | 7,361 | Fix lock permission | {

"avatar_url": "https://avatars.githubusercontent.com/u/11530592?v=4",

"events_url": "https://api.github.com/users/cih9088/events{/privacy}",

"followers_url": "https://api.github.com/users/cih9088/followers",

"following_url": "https://api.github.com/users/cih9088/following{/other_user}",

"gists_url": "https://api.github.com/users/cih9088/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/cih9088",

"id": 11530592,

"login": "cih9088",

"node_id": "MDQ6VXNlcjExNTMwNTky",

"organizations_url": "https://api.github.com/users/cih9088/orgs",

"received_events_url": "https://api.github.com/users/cih9088/received_events",

"repos_url": "https://api.github.com/users/cih9088/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/cih9088/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/cih9088/subscriptions",

"type": "User",

"url": "https://api.github.com/users/cih9088",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-07T04:15:53" | "2025-01-07T04:49:46" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | All files except the lock file get permissions that respect the `ACL` property when it is set.

If the cache directory has an `ACL` property, it should be respected instead of relying only on `umask` for permissions.

To fix this, create the lock file first and pass its resulting `mode` to `FileLock`.

By creating the lock file with `touch()` before `FileLock` creates it with `mode`:

- if `ACL` is not set, same as before

- if `ACL` is set, `ACL` is respected
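
The idea can be sketched with the standard library alone. This is an illustration under assumptions, not the code in this PR: the helper name `prepare_lock_file` is hypothetical, and the `FileLock(..., mode=...)` call in the trailing comment assumes the `filelock` package.

```python
import os
import stat
from pathlib import Path


def prepare_lock_file(lock_path):
    """Create the lock file if needed and return its permission bits."""
    path = Path(lock_path)
    # touch() lets the OS apply the directory defaults (umask, or default ACLs where set)
    path.touch(exist_ok=True)
    return stat.S_IMODE(os.stat(path).st_mode)


# The returned mode could then be handed to the lock, e.g. (assuming the
# `filelock` package): FileLock(lock_path, mode=prepare_lock_file(lock_path))
```

Reading the mode back from the file, rather than hard-coding one, is what lets the lock inherit whatever the directory dictates.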

If this is acceptable, it should probably also be applied to [`huggingface_hub`](https://github.com/huggingface/huggingface_hub/blob/2702ec2a2bd0124cc1fddfd72ccb1297b2478148/src/huggingface_hub/utils/_fixes.py#L95). | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7361/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7361/timeline | null | null | 0 | {

"diff_url": "https://github.com/huggingface/datasets/pull/7361.diff",

"html_url": "https://github.com/huggingface/datasets/pull/7361",

"merged_at": null,

"patch_url": "https://github.com/huggingface/datasets/pull/7361.patch",

"url": "https://api.github.com/repos/huggingface/datasets/pulls/7361"

} | true |

https://api.github.com/repos/huggingface/datasets/issues/7360 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7360/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7360/comments | https://api.github.com/repos/huggingface/datasets/issues/7360/events | https://github.com/huggingface/datasets/issues/7360 | 2,771,751,406 | I_kwDODunzps6lNZHu | 7,360 | error when loading dataset in Hugging Face: NoneType error is not callable | {

"avatar_url": "https://avatars.githubusercontent.com/u/189343338?v=4",

"events_url": "https://api.github.com/users/nanu23333/events{/privacy}",

"followers_url": "https://api.github.com/users/nanu23333/followers",

"following_url": "https://api.github.com/users/nanu23333/following{/other_user}",

"gists_url": "https://api.github.com/users/nanu23333/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/nanu23333",

"id": 189343338,

"login": "nanu23333",

"node_id": "U_kgDOC0kmag",

"organizations_url": "https://api.github.com/users/nanu23333/orgs",

"received_events_url": "https://api.github.com/users/nanu23333/received_events",

"repos_url": "https://api.github.com/users/nanu23333/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/nanu23333/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/nanu23333/subscriptions",

"type": "User",

"url": "https://api.github.com/users/nanu23333",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-07T02:11:36" | "2025-01-10T10:44:38" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

I ran into the following error when running a notebook provided by Hugging Face.

```

---------------------------------------------------------------------------

TypeError Traceback (most recent call last)

Cell In[2], line 5

3 # Load the enhancers dataset from the InstaDeep Hugging Face ressources

4 dataset_name = "enhancers_types"

----> 5 train_dataset_enhancers = load_dataset(

6 "InstaDeepAI/nucleotide_transformer_downstream_tasks_revised",

7 dataset_name,

8 split="train",

9 streaming= False,

10 )

11 test_dataset_enhancers = load_dataset(

12 "InstaDeepAI/nucleotide_transformer_downstream_tasks_revised",

13 dataset_name,

14 split="test",

15 streaming= False,

16 )

File /public/home/hhl/miniconda3/envs/transformer/lib/python3.9/site-packages/datasets/load.py:2129, in load_dataset(path, name, data_dir, data_files, split, cache_dir, features, download_config, download_mode, verification_mode, keep_in_memory, save_infos, revision, token, streaming, num_proc, storage_options, trust_remote_code, **config_kwargs)

2124 verification_mode = VerificationMode(

2125 (verification_mode or VerificationMode.BASIC_CHECKS) if not save_infos else VerificationMode.ALL_CHECKS

2126 )

2128 # Create a dataset builder

-> 2129 builder_instance = load_dataset_builder(

2130 path=path,

2131 name=name,

2132 data_dir=data_dir,

2133 data_files=data_files,

2134 cache_dir=cache_dir,

2135 features=features,

2136 download_config=download_config,

2137 download_mode=download_mode,

2138 revision=revision,

2139 token=token,

2140 storage_options=storage_options,

2141 trust_remote_code=trust_remote_code,

2142 _require_default_config_name=name is None,

2143 **config_kwargs,

2144 )

2146 # Return iterable dataset in case of streaming

2147 if streaming:

File /public/home/hhl/miniconda3/envs/transformer/lib/python3.9/site-packages/datasets/load.py:1886, in load_dataset_builder(path, name, data_dir, data_files, cache_dir, features, download_config, download_mode, revision, token, storage_options, trust_remote_code, _require_default_config_name, **config_kwargs)

1884 builder_cls = get_dataset_builder_class(dataset_module, dataset_name=dataset_name)

1885 # Instantiate the dataset builder

-> 1886 builder_instance: DatasetBuilder = builder_cls(

1887 cache_dir=cache_dir,

1888 dataset_name=dataset_name,

1889 config_name=config_name,

1890 data_dir=data_dir,

1891 data_files=data_files,

1892 hash=dataset_module.hash,

1893 info=info,

1894 features=features,

1895 token=token,

1896 storage_options=storage_options,

1897 **builder_kwargs,

1898 **config_kwargs,

1899 )

1900 builder_instance._use_legacy_cache_dir_if_possible(dataset_module)

1902 return builder_instance

TypeError: 'NoneType' object is not callable

```

I have checked my internet connection and it works fine. The dataset name was copied directly from Hugging Face.

Totally no idea what is wrong!

### Steps to reproduce the bug

To reproduce the bug, run:

```

from datasets import load_dataset

# Load the enhancers dataset from the InstaDeep Hugging Face resources
dataset_name = "enhancers_types"

# Train split
train_dataset_enhancers = load_dataset(
    "InstaDeepAI/nucleotide_transformer_downstream_tasks_revised",
    dataset_name,
    split="train",
    streaming=False,
)

# Test split
test_dataset_enhancers = load_dataset(
    "InstaDeepAI/nucleotide_transformer_downstream_tasks_revised",
    dataset_name,
    split="test",
    streaming=False,
)

```

### Expected behavior

1. What might be causing this error?

2. How can I find out which cause applies in my case?

3. How can I solve the problem?
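
For the second question, one possible starting point is a small wrapper that calls the loader, captures the full traceback, and records the installed package versions alongside it. This is only a sketch using the Python standard library: the helper names `package_versions` and `diagnose` are hypothetical and not part of `datasets`, and it does not identify the root cause by itself.

```python
import importlib.metadata as md
import traceback


def package_versions(names):
    """Return {distribution name: installed version, or None if missing}."""
    versions = {}
    for name in names:
        try:
            versions[name] = md.version(name)
        except md.PackageNotFoundError:
            versions[name] = None  # not installed in this environment
    return versions


def diagnose(loader, *args, **kwargs):
    """Call loader(*args, **kwargs) and return a report instead of raising.

    `loader` is any callable; passing `datasets.load_dataset` is the
    intended (assumed) use, but nothing here depends on that library.
    """
    report = {
        "ok": True,
        "result": None,
        "error": None,
        "traceback": None,
        "versions": package_versions(
            ["datasets", "huggingface_hub", "pyarrow", "fsspec"]
        ),
    }
    try:
        report["result"] = loader(*args, **kwargs)
    except Exception as exc:
        report["ok"] = False
        report["error"] = f"{type(exc).__name__}: {exc}"
        report["traceback"] = traceback.format_exc()
    return report
```

It could be called as `diagnose(load_dataset, "InstaDeepAI/nucleotide_transformer_downstream_tasks_revised", "enhancers_types", split="train")`; the resulting `error`, `traceback`, and `versions` entries are the pieces worth pasting into a report like this one.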

### Environment info

```

- `datasets` version: 3.2.0

- Platform: Linux-5.15.0-117-generic-x86_64-with-glibc2.31

- Python version: 3.9.21

- `huggingface_hub` version: 0.27.0

- PyArrow version: 18.1.0

- Pandas version: 2.2.3

- `fsspec` version: 2024.9.0

``` | null | {

"+1": 0,

"-1": 0,

"confused": 0,

"eyes": 0,

"heart": 0,

"hooray": 0,

"laugh": 0,

"rocket": 0,

"total_count": 0,

"url": "https://api.github.com/repos/huggingface/datasets/issues/7360/reactions"

} | https://api.github.com/repos/huggingface/datasets/issues/7360/timeline | null | null | null | null | false |

https://api.github.com/repos/huggingface/datasets/issues/7359 | https://api.github.com/repos/huggingface/datasets | https://api.github.com/repos/huggingface/datasets/issues/7359/labels{/name} | https://api.github.com/repos/huggingface/datasets/issues/7359/comments | https://api.github.com/repos/huggingface/datasets/issues/7359/events | https://github.com/huggingface/datasets/issues/7359 | 2,771,137,842 | I_kwDODunzps6lLDUy | 7,359 | There are multiple 'mteb/arguana' configurations in the cache: default, corpus, queries with HF_HUB_OFFLINE=1 | {

"avatar_url": "https://avatars.githubusercontent.com/u/723146?v=4",

"events_url": "https://api.github.com/users/Bhavya6187/events{/privacy}",

"followers_url": "https://api.github.com/users/Bhavya6187/followers",

"following_url": "https://api.github.com/users/Bhavya6187/following{/other_user}",

"gists_url": "https://api.github.com/users/Bhavya6187/gists{/gist_id}",

"gravatar_id": "",

"html_url": "https://github.com/Bhavya6187",

"id": 723146,

"login": "Bhavya6187",

"node_id": "MDQ6VXNlcjcyMzE0Ng==",

"organizations_url": "https://api.github.com/users/Bhavya6187/orgs",

"received_events_url": "https://api.github.com/users/Bhavya6187/received_events",

"repos_url": "https://api.github.com/users/Bhavya6187/repos",

"site_admin": false,

"starred_url": "https://api.github.com/users/Bhavya6187/starred{/owner}{/repo}",

"subscriptions_url": "https://api.github.com/users/Bhavya6187/subscriptions",

"type": "User",

"url": "https://api.github.com/users/Bhavya6187",

"user_view_type": "public"

} | [] | open | false | null | [] | null | [] | "2025-01-06T17:42:49" | "2025-01-06T17:43:31" | 1970-01-01T00:00:00 | NONE | {

"completed": 0,

"percent_completed": 0,

"total": 0

} | null | ### Describe the bug

Hey folks,

I am trying to run this code:

```python

from datasets import load_dataset, get_dataset_config_names

ds = load_dataset("mteb/arguana")

```

with `HF_HUB_OFFLINE=1` set in the environment,

but I get the following error:

```python

Using the latest cached version of the dataset since mteb/arguana couldn't be found on the Hugging Face Hub (offline mode is enabled).

---------------------------------------------------------------------------

ValueError Traceback (most recent call last)

Cell In[2], line 1

----> 1 ds = load_dataset("mteb/arguana")

File ~/env/lib/python3.10/site-packages/datasets/load.py:2129, in load_dataset(path, name, data_dir, data_files, split, cache_dir, features, download_config, download_mode, verification_mode, keep_in_memory, save_infos, revision, token, streaming, num_proc, storage_options, trust_remote_code, **config_kwargs)

2124 verification_mode = VerificationMode(

2125 (verification_mode or VerificationMode.BASIC_CHECKS) if not save_infos else VerificationMode.ALL_CHECKS

2126 )

2128 # Create a dataset builder

-> 2129 builder_instance = load_dataset_builder(

2130 path=path,

2131 name=name,

2132 data_dir=data_dir,

2133 data_files=data_files,

2134 cache_dir=cache_dir,

2135 features=features,

2136 download_config=download_config,

2137 download_mode=download_mode,

2138 revision=revision,

2139 token=token,

2140 storage_options=storage_options,

2141 trust_remote_code=trust_remote_code,

2142 _require_default_config_name=name is None,

2143 **config_kwargs,

2144 )

2146 # Return iterable dataset in case of streaming

2147 if streaming:

File ~/env/lib/python3.10/site-packages/datasets/load.py:1886, in load_dataset_builder(path, name, data_dir, data_files, cache_dir, features, download_config, download_mode, revision, token, storage_options, trust_remote_code, _require_default_config_name, **config_kwargs)

1884 builder_cls = get_dataset_builder_class(dataset_module, dataset_name=dataset_name)

1885 # Instantiate the dataset builder

-> 1886 builder_instance: DatasetBuilder = builder_cls(

1887 cache_dir=cache_dir,

1888 dataset_name=dataset_name,

1889 config_name=config_name,

1890 data_dir=data_dir,

1891 data_files=data_files,

1892 hash=dataset_module.hash,

1893 info=info,

1894 features=features,

1895 token=token,

1896 storage_options=storage_options,

1897 **builder_kwargs,

1898 **config_kwargs,

1899 )

1900 builder_instance._use_legacy_cache_dir_if_possible(dataset_module)

1902 return builder_instance