---
tags:
- npu
- amd
- llama2
- RyzenAI
---

This model is a fine-tuned version of webbigdata/ALMA-7B-Ja-V2 that has been AWQ-quantized and converted to run on the NPU of a Ryzen AI PC, for example one with a Ryzen 9 7940HS processor.
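AWQ stores weights as low-bit integers with one scale and zero point per small group of input channels. The checkpoint name `alma_w_bit_4_awq_fa_amd.pt` and the `QLinearPerGrp` layer class used in the sample suggest 4-bit, per-group storage. The sketch below illustrates only that round-to-nearest group quantization step, modeled loosely on the min-max scheme in mit-han-lab/llm-awq. Real AWQ additionally searches activation-aware per-channel scales before this step, and the group size of 4 here is an illustrative assumption (AWQ setups commonly use 128).

```python
from typing import List, Tuple


def quantize_group(values: List[float], n_bits: int = 4) -> Tuple[List[int], float, int]:
    """Min-max (asymmetric) round-to-nearest quantization of one weight group."""
    qmax = 2 ** n_bits - 1                          # 15 for 4-bit codes
    lo, hi = min(values), max(values)
    scale = max(hi - lo, 1e-5) / qmax               # one float scale per group
    zero = min(max(-round(lo / scale), 0), qmax)    # one integer zero point per group
    q = [min(max(round(v / scale) + zero, 0), qmax) for v in values]
    return q, scale, zero


def dequantize_group(q: List[int], scale: float, zero: int) -> List[float]:
    """Map the integer codes back to approximate float weights."""
    return [(x - zero) * scale for x in q]


def quantize_per_group(weights: List[float], group_size: int = 4, n_bits: int = 4):
    """Split one weight row into fixed-size groups and quantize each independently."""
    return [quantize_group(weights[i:i + group_size], n_bits)
            for i in range(0, len(weights), group_size)]


if __name__ == "__main__":
    row = [0.12, -0.50, 0.33, 0.90, -1.20, 0.05, 0.40, -0.07]
    groups = quantize_per_group(row, group_size=4)
    restored = [w for q, s, z in groups for w in dequantize_group(q, s, z)]
    print(groups[0][0])  # 4-bit codes of the first group: [6, 0, 9, 15]
    print(max(abs(a - b) for a, b in zip(row, restored)))  # worst reconstruction error
```

Because each group gets its own scale, an outlier only coarsens the resolution of its own group rather than of the whole row.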

To set up Ryzen AI for LLMs on Windows 11, see Running LLM on AMD NPU Hardware.

The following sample assumes that the setup on that page has been completed.

This model has only been tested on RyzenAI for Windows 11. It does not work in Linux environments such as WSL.
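Since the sample only runs natively on Windows 11, a script can fail fast before loading the 7B checkpoint. The sketch below is a hypothetical pre-flight helper (`preflight_problems` is not part of RyzenAI-SW). It assumes the conda environment name `ryzenai-transformers` from the setup steps, and that the `qlinear` module imported by the sample becomes importable only once the RyzenAI-SW setup has been done.

```python
import importlib.util
import os
import platform
from typing import List, Mapping, Optional

WIN11_MIN_BUILD = 22000  # Windows 11 build numbers start at 22000


def preflight_problems(system: Optional[str] = None,
                       version: Optional[str] = None,
                       env: Optional[Mapping[str, str]] = None,
                       qlinear_found: Optional[bool] = None) -> List[str]:
    """Return reasons why the NPU sample is unlikely to run on this machine."""
    system = platform.system() if system is None else system
    version = platform.version() if version is None else version
    env = os.environ if env is None else env
    if qlinear_found is None:
        # find_spec locates a top-level module without importing it.
        qlinear_found = importlib.util.find_spec("qlinear") is not None

    problems = []
    if system != "Windows":
        # WSL reports "Linux" here, which is also unsupported.
        problems.append(f"unsupported OS {system!r}: native Windows 11 is required")
    else:
        parts = version.split(".")  # e.g. "10.0.22631"
        build = int(parts[2]) if len(parts) > 2 and parts[2].isdigit() else 0
        if build < WIN11_MIN_BUILD:
            problems.append(f"Windows build {build} is older than Windows 11")
    if env.get("CONDA_DEFAULT_ENV") != "ryzenai-transformers":
        problems.append("conda environment 'ryzenai-transformers' is not active")
    if not qlinear_found:
        problems.append("module 'qlinear' not found; run RyzenAI-SW setup.bat first")
    return problems


if __name__ == "__main__":
    for problem in preflight_problems():
        print("WARNING:", problem)
```

The checks are injectable through parameters so the function can be exercised on any machine, not only on a Ryzen AI PC.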

2024/07/30

- Ryzen AI Software 1.2 has been released. Please note that this model is based on Ryzen AI Software 1.1.

- amd/RyzenAI-SW 1.2 was announced on July 29, 2024. This sample is for amd/RyzenAI-SW 1.1. Please note that the folder structure and script contents changed completely in 1.2.

2024/08/04

- This model was created with the 1.1 driver, but it has been confirmed to work with 1.2. If you use 1.2, please check the setup for the 1.2 driver.

Setup for the 1.1 driver

In a cmd window:

conda activate ryzenai-transformers
<your_install_path>\RyzenAI-SW\example\transformers\setup.bat
pip install transformers==4.34.0
REM Downgrading the Transformers library will cause the Llama 3 sample to stop working.
REM To run Llama 3 or later, upgrade again with: pip install transformers==4.43.3
pip install -U "huggingface_hub[cli]"
huggingface-cli download dahara1/ALMA-Ja-V3-amd-npu --revision main --local-dir ALMA-Ja-V3-amd-npu
copy <your_ryzen_ai-sw_install_path>\RyzenAI-SW\example\transformers\models\llama2\modeling_llama_amd.py .
REM Set up the runtime; see https://ryzenai.docs.amd.com/en/latest/runtime_setup.html
set XLNX_VART_FIRMWARE=<your_firmware_install_path>\voe-4.0-win_amd64\1x4.xclbin
set NUM_OF_DPU_RUNNERS=1
REM Save the sample script below as ALMA-Ja-V3-amd-npu-test.py with UTF-8 encoding.
python ALMA-Ja-V3-amd-npu-test.py
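The two `set` commands only last for the current cmd window, and the Transformers pin is easy to undo when switching to the Llama 3 sample. The hypothetical helper below (`setup_problems` is not part of RyzenAI-SW) checks from Python that `XLNX_VART_FIRMWARE` points at an existing file, that `NUM_OF_DPU_RUNNERS` is `1` as in the steps above, and that the installed `transformers` matches the 4.34.0 pin. It uses only the standard library (`importlib.metadata`, Python 3.8+).

```python
import os
from importlib import metadata
from typing import List, Mapping, Optional

EXPECTED_TRANSFORMERS = "4.34.0"  # version pinned in the setup steps


def setup_problems(env: Optional[Mapping[str, str]] = None,
                   transformers_version: Optional[str] = None) -> List[str]:
    """Check the environment variables and package pin used by the sample."""
    env = os.environ if env is None else env
    problems = []

    firmware = env.get("XLNX_VART_FIRMWARE")
    if not firmware:
        problems.append("XLNX_VART_FIRMWARE is not set")
    elif not os.path.isfile(firmware):
        problems.append(f"XLNX_VART_FIRMWARE points to a missing file: {firmware}")

    if env.get("NUM_OF_DPU_RUNNERS") != "1":
        problems.append("NUM_OF_DPU_RUNNERS is not set to 1")

    if transformers_version is None:
        try:
            transformers_version = metadata.version("transformers")
        except metadata.PackageNotFoundError:
            transformers_version = "none"
    if transformers_version != EXPECTED_TRANSFORMERS:
        problems.append(f"transformers {transformers_version} is installed; "
                        f"this sample pins {EXPECTED_TRANSFORMERS}")
    return problems


if __name__ == "__main__":
    for problem in setup_problems():
        print("WARNING:", problem)
```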

Sample Script

import torch

import psutil

import transformers

from transformers import AutoTokenizer, set_seed

import qlinear

import logging

def translation(instruction, input):

system = """You are a highly skilled professional Japanese-English and English-Japanese translator. Translate the given text accurately, taking into account the context and specific instructions provided. Steps may include hints enclosed in square brackets [] with the key and value separated by a colon:. Only when the subject is specified in the Japanese sentence, the subject will be added when translating into English. If no additional instructions or context are provided, use your expertise to consider what the most appropriate context is and provide a natural translation that aligns with that context. When translating, strive to faithfully reflect the meaning and tone of the original text, pay attention to cultural nuances and differences in language usage, and ensure that the translation is grammatically correct and easy to read. After completing the translation, review it once more to check for errors or unnatural expressions. For technical terms and proper nouns, either leave them in the original language or use appropriate translations as necessary. Take a deep breath, calm down, and start translating."""

prompt = f"""{system}

### Instruction:

{instruction}

### Input:

{input}

### Response:

"""

tokenized_input = tokenizer(prompt, return_tensors="pt",

padding=True, max_length=1600, truncation=True)

terminators = [

tokenizer.eos_token_id,

]

outputs = model.generate(tokenized_input['input_ids'],

max_new_tokens=600,

eos_token_id=terminators,

attention_mask=tokenized_input['attention_mask'],

do_sample=True,

temperature=0.3,

top_p=0.5)

response = outputs[0][tokenized_input['input_ids'].shape[-1]:]

response_message = tokenizer.decode(response, skip_special_tokens=True)

return response_message

if __name__ == "__main__":

set_seed(123)

p = psutil.Process()

p.cpu_affinity([0, 1, 2, 3])

torch.set_num_threads(4)

transformers.logging.set_verbosity_error()

logging.disable(logging.CRITICAL)

tokenizer = AutoTokenizer.from_pretrained("ALMA-Ja-V3-amd-npu")

tokenizer.pad_token = tokenizer.eos_token

ckpt = r"ALMA-Ja-V3-amd-npu\alma_w_bit_4_awq_fa_amd.pt"

model = torch.load(ckpt)

model.eval()

model = model.to(torch.bfloat16)

for n, m in model.named_modules():

if isinstance(m, qlinear.QLinearPerGrp):

print(f"Preparing weights of layer : {n}")

m.device = "aie"

m.quantize_weights()

print(translation("Translate Japanese to English.", "面白きこともなき世を面白く住みなすものは心なりけり"))

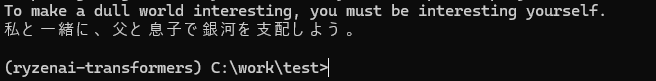

print(translation("Translate English to Japanese.", "Join me, and together we can rule the galaxy as father and son."))
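`model.generate` is called with `do_sample=True`, `temperature=0.3` and `top_p=0.5`, which tends to keep the output close to greedy decoding while still allowing some variation. The pure-Python sketch below illustrates what those two parameters do to one decoding step. It divides the logits by the temperature, applies a softmax, keeps the smallest set of top tokens whose cumulative probability reaches `top_p`, renormalizes, and samples. It is a simplified illustration, not Transformers' implementation: the library applies these as logits warpers over tensors, and details such as `min_tokens_to_keep` and the exact cut-off boundary differ. `top_p_distribution` and `sample_next_token` are hypothetical names.

```python
import math
import random
from typing import Dict, List, Optional


def top_p_distribution(logits: List[float], temperature: float, top_p: float) -> Dict[int, float]:
    """Temperature-scale logits, softmax, then keep only the nucleus of top tokens."""
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max before exp() for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    ranked = sorted(((i, e / total) for i, e in enumerate(exps)),
                    key=lambda item: item[1], reverse=True)

    kept, cumulative = [], 0.0
    for token_id, prob in ranked:
        kept.append((token_id, prob))
        cumulative += prob
        if cumulative >= top_p:  # smallest prefix whose probability mass reaches top_p
            break

    norm = sum(prob for _, prob in kept)
    return {token_id: prob / norm for token_id, prob in kept}


def sample_next_token(logits: List[float], temperature: float = 0.3,
                      top_p: float = 0.5, rng: Optional[random.Random] = None) -> int:
    """Draw one token id from the filtered, renormalized distribution."""
    rng = rng if rng is not None else random.Random()
    dist = top_p_distribution(logits, temperature, top_p)
    token_ids = list(dist)
    return rng.choices(token_ids, weights=[dist[t] for t in token_ids], k=1)[0]


if __name__ == "__main__":
    logits = [2.0, 1.5, 0.5, -1.0]  # toy scores for four candidate tokens
    print(top_p_distribution(logits, temperature=0.3, top_p=0.5))  # only token 0 survives
    print(top_p_distribution(logits, temperature=1.0, top_p=0.9))  # tokens 0, 1 and 2 survive
```

With these toy logits, the sample's settings leave a single candidate, so sampling behaves like greedy decoding at that step; a higher temperature and `top_p` widen the pool.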

Acknowledgements

- amd/RyzenAI-SW: sample code and drivers.
- mit-han-lab/llm-awq: thanks for the AWQ quantization method.
- meta-llama/Llama-2-7b-hf

Built with Meta Llama 2

Llama 2 is licensed under the Llama 2 Community License, Copyright © Meta Platforms, Inc. All Rights Reserved.