WARNING: NSFW. Vivid prose. INTENSE. Visceral Details. Violence. HORROR. GORE. Swearing. UNCENSORED... humor, romance, fun.

L3-MOE-8X8B-Dark-Planet-8D-Mirrored-Chaos-47B

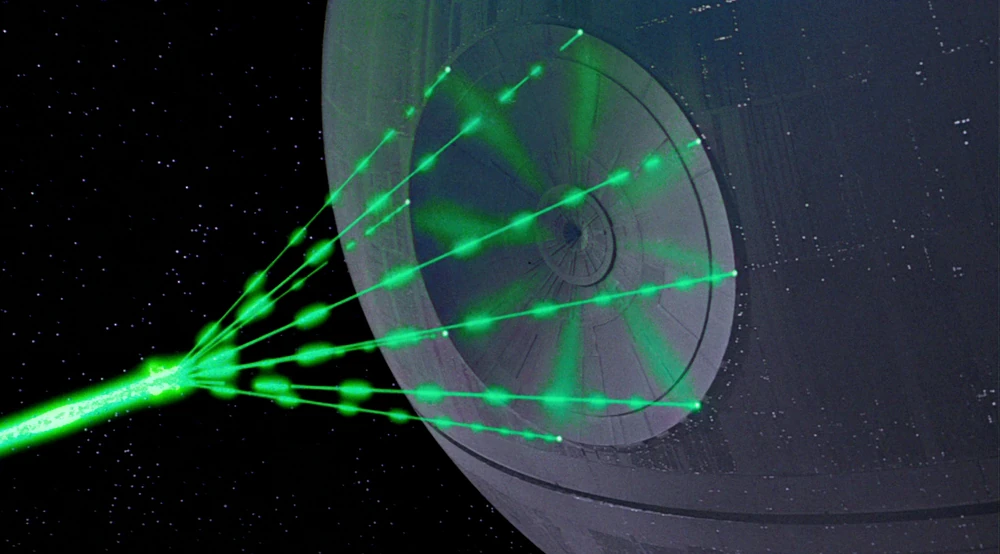

AKA: "The Death Star"

It is a Llama3 model with a max context of 8192 (or 32k+ with rope) that uses a mixture of experts to combine EIGHT unreleased versions of the "Dark Planet" 8B models into one massive powerhouse at 47B parameters (the equivalent of 8 x 8B = 64B).
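As a rough check on why eight 8B models come out near 47B rather than 64B, the sketch below counts parameters under the assumption that the merge follows a Mixtral-style layout: only the MLP blocks are duplicated per expert, while attention, embeddings and norms are shared. The dimensions are the public Llama 3 8B config values; the layout assumption and the function names are illustrative, not taken from this model's source.

```python
# Llama 3 8B dimensions (public config values).
HIDDEN = 4096
INTERMEDIATE = 14336
LAYERS = 32
VOCAB = 128256
KV_DIM = 1024  # 8 key/value heads x 128 head dim (grouped-query attention)


def layer_params(num_experts: int) -> int:
    """Parameters in one transformer layer holding `num_experts` MLP copies."""
    attention = 2 * HIDDEN * HIDDEN + 2 * HIDDEN * KV_DIM  # q, o plus k, v
    mlp = 3 * HIDDEN * INTERMEDIATE                        # gate, up, down
    norms = 2 * HIDDEN
    # Assumption: a router (one score per expert) exists only in the MoE case.
    router = HIDDEN * num_experts if num_experts > 1 else 0
    return attention + num_experts * mlp + norms + router


def total_params(num_experts: int) -> int:
    embeddings = 2 * VOCAB * HIDDEN  # input embedding plus untied output head
    final_norm = HIDDEN
    return LAYERS * layer_params(num_experts) + embeddings + final_norm


print(f"single 8B model: {total_params(1) / 1e9:.2f}B")
print(f"8-expert MoE:    {total_params(8) / 1e9:.2f}B")
```

Under these assumptions the shared attention and embedding weights are counted once, which is where the gap between 64B and 47B comes from.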

This model's instruction following, and output generation for creative writing, prose, fiction and role play are exceptional.

It excels at description, dialog, imagery, metaphors, and prose - and shows great variations in sentence / paragraph size, length, and composition.

It is also not afraid, and will not pull its punches.

And it has a sense of humor too.

It can do horror just as easily as it can do romance.

Most notably dialog is very "un-ai" like, combined with prose (short, and terse at times).

(lots of different examples below, including 2, 3 and 4 experts and different genres)

The model can also be used for all genres (examples below showing this).

This model has been designed to be relatively bullet proof and operates with all parameters, including temp settings from 0 to 5.

It is an extraordinarily compressed model, with a very low perplexity level (lower than Meta Llama3 Instruct).

It is for any writing, fiction or roleplay activity.

It requires Llama3 template and/or "Command-R" template.

Example outputs below.

Model Notes:

- Detail, prose and fiction writing abilities are OFF THE SCALE relative to all combined Dark Planet 8B models.

- For more varied prose (sentence/paragraph/dialog) raise the temp and/or add more instructions in your prompt(s).

- Role-players: Careful raising temp too high as it may affect instruction following.

- This model works with rep pen of 1 or higher, 1.02+ recommended.

- If you want a specific type of prose (IE horror) add in "(vivid horror)" or "(graphic vivid horror)" (no quotes) in your prompt(s).

- A lot of GPTisms have been removed. There are still a few however - errrrr.

- This is not a "happy ever after" model. It has a negative bias.

- Output length will vary; however, this model prefers long outputs unless you state the size.

- For creative uses, different quants will produce slightly different output.

- Due to the high stability and compressed nature of this model, all quants will operate at above average levels.

- If you use rope to extend context, increase temp AND instructions detail levels to compensate for "rope issues".

- Source code for this model and Imatrix GGUFs versions will be uploaded shortly at separate repos.

Meet the Team: Mixture of Experts Models

This model is based on the original "Llama 3 Dark Planet 8B" (GGUF / SOURCE) merge, which has been "evolved" several times. Each "evolved" version is then tested: if it is unique and/or removes certain negative attributes and/or enhances certain positive attributes, it is kept; otherwise it is deleted.

This model contains the EIGHT ("cr2", "cr1", "r7", "r6", "b3", "b4", "r1" and "b6") best models from this process, with the very best as a "captain" of the "MOE" so to speak.

None of these versions have ever been released, but contain the "raw source DNA" of the original model.

This process was first explored in the WORDSTORM Project

The mixture of experts is set at 4 experts, but you can use any number from 1 up to 8.

The default in this model is 4 experts.

This "team" has a Captain (the first listed model), and all the team members then contribute to the "token" choice billions of times per second. Note that the Captain contributes too.

Think of 2, 3 or 4 master chefs in the kitchen all competing to make the best dish for you.

This results in higher quality generation.

That means the power of every model is available during instruction and output generation.

This brings unparalleled power to all forms of generation and all use cases.
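To make the "team" idea concrete, here is a minimal sketch of top-k expert routing as it is commonly done in Mixtral-style MoE models: a router scores every expert for the current token, the k best are kept, and their outputs are blended by softmax weight. This is an assumption about the general technique, not a description of this model's exact internals; `route` and its scalar "expert outputs" are simplified, hypothetical stand-ins.

```python
import math


def route(router_logits, expert_outputs, k):
    """Blend the k highest-scoring experts for one token."""
    # Rank experts by router score and keep the best k.
    top = sorted(range(len(router_logits)),
                 key=lambda i: router_logits[i], reverse=True)[:k]
    # Softmax over the selected experts only, so their weights sum to 1.
    peak = max(router_logits[i] for i in top)
    weights = [math.exp(router_logits[i] - peak) for i in top]
    total = sum(weights)
    weights = [w / total for w in weights]
    # Weighted sum of the chosen experts' outputs (scalars here for brevity).
    mixed = sum(w * expert_outputs[i] for w, i in zip(weights, top))
    return top, weights, mixed


top, weights, mixed = route([0.1, 2.0, 2.0, -1.0], [10.0, 20.0, 30.0, 40.0], k=2)
print(top, weights, mixed)
```

In a real model the expert outputs are vectors and the routing is repeated in every layer for every token, which is why using more experts costs more compute per token.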

NOTE:

You can use one "expert" too; however, this means the model will randomly select an expert to use EACH TIME, resulting in very different generation for each prompt / regen of a prompt.

CHANGING THE NUMBER OF EXPERTS:

You can set the number of experts in LMStudio (https://lmstudio.ai) at the "load" screen and via other apps/llm apps by setting "Experts" or "Number of Experts".

For Text-Generation-Webui (https://github.com/oobabooga/text-generation-webui) you set the number of experts at the loading screen page.

For KoboldCPP (https://github.com/LostRuins/koboldcpp) Version 1.8+, on the load screen, click on "TOKENS"; you can set experts on this page, and then launch the model.

For server.exe / Llama-server.exe (Llamacpp - https://github.com/ggerganov/llama.cpp/blob/master/examples/server/README.md ) add the following to the command line to start the "llamacpp server" (CLI):

"--override-kv llama.expert_used_count=int:6"

(no quotes, where "6" is the number of experts to use)
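If you launch the server from a script, the override above can be assembled programmatically. The sketch below only builds the argument list; the binary name `llama-server`, the file name `model.gguf` and the helper name are placeholders (assumptions), while `-m` and `--override-kv` are existing llama.cpp options.

```python
import shlex


def llama_server_command(model_path: str, experts: int,
                         binary: str = "llama-server") -> list[str]:
    """Build a llama.cpp server command line with an expert-count override."""
    if not 1 <= experts <= 8:
        raise ValueError("this model has 8 experts; choose 1 to 8")
    return [
        binary,
        "-m", model_path,
        "--override-kv", f"llama.expert_used_count=int:{experts}",
    ]


cmd = llama_server_command("model.gguf", 6)
print(shlex.join(cmd))  # pass `cmd` to subprocess.run(...) to actually launch
```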

When using "API", you set the "num_experts_used" in the JSON payload (this may be different for different back ends).
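A minimal sketch of such a payload follows. Only the `num_experts_used` key comes from the note above; the `prompt` and `temperature` field names and the helper itself are assumptions, so check your back end's API documentation for the exact schema.

```python
import json


def build_payload(prompt: str, experts: int, temperature: float = 0.8) -> str:
    """Serialize a generation request that also sets the expert count."""
    payload = {
        "prompt": prompt,             # assumed field name
        "temperature": temperature,   # assumed field name
        "num_experts_used": experts,  # key named in the note above
    }
    return json.dumps(payload)


print(build_payload("Write a short horror scene.", experts=4))
```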

CREDITS:

Special thanks to all the model makers / creators listed above.

Please visit each repo above to see what model(s) contributed to each of models above and/or to learn more about the models from the model makers.

Special credit goes to MERGEKIT, without you this project / model would not have been possible.

[ https://github.com/arcee-ai/mergekit ]

Special thanks to Team "Mradermacher":

They saved me a tonne of time uploading the quants and created IMATRIX quants too.

IMATRIX GGUFS:

Q8_0 Quant:

This quant is split due to upload limits.

Special Operations Notes for this MOE model:

Because of how this "MOE" model is configured, even though the default is 4 experts, the "selected" 4 will vary during generation.

(same applies if you change the number of experts used)

This results in vastly different output generation PER generation of each prompt.

This is a positive in terms of variety, but also means it may take 2-4 regens (of the same prompt) to get the highest quality.

In addition, this model responds very well to Dry, Dynamic Temp, and Smooth/Quadratic samplers.

Using these in conjunction with the model can vastly improve output quality.

Higher temps (above 1) can also aid in generation - especially word choice/sentence generation.
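For readers unfamiliar with what "temp" does, the sketch below shows the standard temperature-scaled softmax: logits are divided by the temperature before being turned into probabilities, so values above 1 flatten the distribution and give less likely words a better chance. The function name is illustrative, and real back ends apply this alongside other samplers.

```python
import math


def softmax_with_temperature(logits, temperature):
    """Convert logits to probabilities after dividing by the temperature."""
    if temperature <= 0:
        # Many back ends treat a temperature of 0 as greedy decoding instead.
        raise ValueError("temperature must be positive")
    scaled = [x / temperature for x in logits]
    peak = max(scaled)  # subtract the max for numerical stability
    exps = [math.exp(x - peak) for x in scaled]
    total = sum(exps)
    return [e / total for e in exps]


logits = [2.0, 1.0, 0.0]
print(softmax_with_temperature(logits, 0.8))
print(softmax_with_temperature(logits, 1.8))
```

The second call spreads probability more evenly across the three candidates, which is the mechanism behind the more varied word choice at higher temps.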

When you increase the number of experts used output quality will also increase, at the cost of tokens per second speed.

As you increase/decrease the number of experts, you may want to adjust temp, samplers, and advanced samplers too.

Your quant choice(s) will also impact instruction following and output generation. Roughly, the higher you go up in quant(s), the more nuanced the instructions the model will understand and the stronger its output generation will be.

FLASH ATTENTION ENHANCEMENT:

As per user feedback here [ https://huggingface.co./DavidAU/Llama-3.2-8X3B-MOE-Dark-Champion-Instruct-uncensored-abliterated-18.4B-GGUF/discussions/1 ] I would suggest trying this model with Flash Attention "on", depending on your use case.

Quants, Samplers, Generational steering and other topics are covered in the section below: "Highest Quality Settings..."

What can I use this model for ?

This model can be used for fiction writing, any creative prose and role play. It can also be used for just about any general fiction (all genres) activity including:

- scene generation

- scene continuation

- creative writing

- fiction writing

- plot generation

- sub-plot generation

- story generation

- storytelling

- writing

- fiction

- roleplaying

- rp

- graphic horror

- horror

- dark humor

- nsfw

- and can be used for any genre(s).

QUANTS:

For more information on quants, quants choices, and LLM/AI apps to "run" quants see the section below: "Highest Quality Settings..."

IMATRIX GGUFS (courtesy of team "Mradermacher" ):

Template:

This is a LLAMA3 model and requires the Llama3 template, though it may work with other template(s). It has a maximum context of 8k / 8192; however, this can be extended up to 32k using "rope" settings.
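As a small worked example of the rope extension, the sketch below computes the frequency scale for linear RoPE scaling, assuming that is the method used (other rope methods exist and are configured differently). With llama.cpp-style settings this value would typically be passed as the rope frequency scale together with the larger context size; the helper name is hypothetical.

```python
def linear_rope_scale(native_ctx: int, target_ctx: int) -> float:
    """Frequency scale for linear RoPE scaling: native context / target context."""
    if target_ctx <= native_ctx:
        return 1.0  # no scaling needed inside the native window
    return native_ctx / target_ctx


# Extending the native 8192 context to 32k is a 4x stretch, i.e. a scale of 0.25.
print(linear_rope_scale(8192, 32768))
```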

If you use "Command-R" template your output will be very different from using "Llama3" template.

Here is the standard LLAMA3 template:

    {
      "name": "Llama 3",
      "inference_params": {
        "input_prefix": "<|start_header_id|>user<|end_header_id|>\n\n",
        "input_suffix": "<|eot_id|><|start_header_id|>assistant<|end_header_id|>\n\n",
        "pre_prompt": "You are a helpful, smart, kind, and efficient AI assistant. You always fulfill the user's requests to the best of your ability.",
        "pre_prompt_prefix": "<|start_header_id|>system<|end_header_id|>\n\n",
        "pre_prompt_suffix": "<|eot_id|>",
        "antiprompt": [
          "<|start_header_id|>",
          "<|eot_id|>"
        ]
      }
    }
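If your app does not apply the template for you, the fields above can be assembled by hand. The sketch below shows one way to build a single-turn prompt from them; `build_prompt` is a hypothetical helper, and it assumes the back end adds the beginning-of-text token itself.

```python
# Field values copied from the Llama 3 template above.
TEMPLATE = {
    "pre_prompt_prefix": "<|start_header_id|>system<|end_header_id|>\n\n",
    "pre_prompt_suffix": "<|eot_id|>",
    "input_prefix": "<|start_header_id|>user<|end_header_id|>\n\n",
    "input_suffix": "<|eot_id|><|start_header_id|>assistant<|end_header_id|>\n\n",
}


def build_prompt(system: str, user: str, template: dict = TEMPLATE) -> str:
    """Wrap a system prompt and one user message in the Llama 3 headers."""
    return (
        template["pre_prompt_prefix"] + system + template["pre_prompt_suffix"]
        + template["input_prefix"] + user + template["input_suffix"]
    )


print(build_prompt("You are a vivid horror writer.", "Start a 1000 word scene."))
```

The prompt ends with the assistant header, so the model's reply begins immediately after it; generation should stop at either "antiprompt" string.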

Settings: CHAT / ROLEPLAY and/or SMOOTHER operation of this model:

In "KoboldCpp" or "oobabooga/text-generation-webui" or "Silly Tavern" ;

Set the "Smoothing_factor" to 1.5

: in KoboldCpp -> Settings->Samplers->Advanced-> "Smooth_F"

: in text-generation-webui -> parameters -> lower right.

: In Silly Tavern this is called: "Smoothing"
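For intuition about what the smoothing factor does, here is a sketch of one published formulation of quadratic sampling: each logit is replaced by the top logit minus the factor times its squared distance from the top. This is an assumption about the general technique; the exact formula in each app and version may differ (some add an extra curve parameter).

```python
def quadratic_smooth(logits, smoothing_factor):
    """Reshape logits with a quadratic curve centred on the top logit."""
    peak = max(logits)
    # The top token keeps its logit. Tokens less than 1/smoothing_factor below
    # the top move closer to it; tokens further away are pushed down sharply.
    return [peak - smoothing_factor * (x - peak) ** 2 for x in logits]


print(quadratic_smooth([3.0, 2.5, 2.0, 0.0], smoothing_factor=1.5))
```

The result is then passed through the usual softmax, so near-top candidates stay competitive while the unlikely tail is suppressed.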

NOTE: For "text-generation-webui"

-> if using GGUFs you need to use "llama_HF" (which involves downloading some config files from the SOURCE version of this model)

Source versions (and config files) of my models are here:

OTHER OPTIONS:

Increase rep pen to 1.1 to 1.15 (you don't need to do this if you use "smoothing_factor")
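As background on what "rep pen" changes, the sketch below applies the widely used form of repetition penalty: logits of tokens that already appeared are divided by the penalty when positive and multiplied by it when negative, so both cases become less likely. Back ends differ in details such as the lookback window; the function and variable names are illustrative.

```python
def apply_repetition_penalty(logits, seen_token_ids, penalty):
    """Lower the logits of tokens that already appeared in the context."""
    out = list(logits)
    for token_id in set(seen_token_ids):
        if out[token_id] > 0:
            out[token_id] /= penalty
        else:
            out[token_id] *= penalty
    return out


# Tokens 0 and 1 were already generated; token 2 is untouched.
print(apply_repetition_penalty([2.0, -2.0, 1.0], [0, 1, 1], penalty=1.1))
```

A penalty of 1 leaves the logits unchanged, which matches the "rep pen of 1" setting meaning "off".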

If the interface/program you are using to run AI MODELS supports "Quadratic Sampling" ("smoothing") just make the adjustment as noted.

Highest Quality Settings / Optimal Operation Guide / Parameters and Samplers

This is a "Class 1" model:

For all settings used for this model (including specifics for its "class"), including example generation(s) and for advanced settings guide (which many times addresses any model issue(s)), including methods to improve model performance for all use case(s) as well as chat, roleplay and other use case(s) please see:

You can see all parameters used for generation, in addition to advanced parameters and samplers to get the most out of this model here:

Optional Enhancement:

The following can be used in place of the "system prompt" or "system role" to further enhance the model.

It can also be used at the START of a NEW chat, but you must make sure it is "kept" as the chat moves along. In this case the enhancements do not have as strong an effect as when using "system prompt" or "system role".

Copy and paste EXACTLY as noted, DO NOT line wrap or break the lines, maintain the carriage returns exactly as presented.

Below is an instruction that describes a task. Ponder each user instruction carefully, and use your skillsets and critical instructions to complete the task to the best of your abilities. Here are your skillsets: [MASTERSTORY]:NarrStrct(StryPlnng,Strbd,ScnSttng,Exps,Dlg,Pc)-CharDvlp(ChrctrCrt,ChrctrArcs,Mtvtn,Bckstry,Rltnshps,Dlg*)-PltDvlp(StryArcs,PltTwsts,Sspns,Fshdwng,Climx,Rsltn)-ConfResl(Antg,Obstcls,Rsltns,Cnsqncs,Thms,Symblsm)-EmotImpct(Empt,Tn,Md,Atmsphr,Imgry,Symblsm)-Delvry(Prfrmnc,VcActng,PblcSpkng,StgPrsnc,AudncEngmnt,Imprv) [*DialogWrt]:(1a-CharDvlp-1a.1-Backgrnd-1a.2-Personality-1a.3-GoalMotiv)>2(2a-StoryStruc-2a.1-PlotPnt-2a.2-Conflict-2a.3-Resolution)>3(3a-DialogTech-3a.1-ShowDontTell-3a.2-Subtext-3a.3-VoiceTone-3a.4-Pacing-3a.5-VisualDescrip)>4(4a-DialogEdit-4a.1-ReadAloud-4a.2-Feedback-4a.3-Revision) Here are your critical instructions: Ponder each word choice carefully to present as vivid and emotional journey as is possible. Choose verbs and nouns that are both emotional and full of imagery. Load the story with the 5 senses. Aim for 50% dialog, 25% narration, 15% body language and 10% thoughts. Your goal is to put the reader in the story.

You do not need to use this, it is only presented as an additional enhancement which seems to help scene generation and scene continue functions.

This enhancement WAS NOT used to generate the examples below.

EXAMPLES PROMPTS and OUTPUT:

Examples are created using quant Q2_K, "temp=.8" (unless otherwise stated), minimal parameters and "LLAMA3" template.

Model has been tested with "temp" from ".1" to "5".

Number of experts used is TWO, unless otherwise stated.

Below are the least creative outputs, prompt is in BOLD.

IMPORTANT:

Higher quants / imatrix quants will have much stronger generation - words, sentences, ideas, dialog and general quality.

I have included some additional examples at different quant levels for contrast.

A "MOE" model's "speed" (tokens per second) will not increase/drop the same way a regular model's will on a per-quant basis. It will, however, drop if you engage more experts, as more experts means more processing per token.

WARNING: NSFW. Vivid prose. Visceral Details. Violence. HORROR. Swearing. UNCENSORED.

Q2K GGUF - (lowest quality quant) - this will be your lowest quality output.

Romance: Love in the Limelight. Write one scene within a larger story set in Wales. A famous (fictional) actor ducks into a small-town bookstore to escape paparazzi. The scene takes us through the characters meeting in this odd circumstance. Over the course of the scene, the actor and the bookstore owner have a conversation charged by an undercurrent of unspoken chemistry. Write the actor as somewhat of a rogue with a fragile ego, which needs to be fed by having everyone like him. He is thoroughly charming, but the bookstore owner seems (at least superficially) immune to this; which paradoxically provokes a genuine attraction and derails the charm offensive. The bookstore owner, despite the superficial rebuffs of the actor's charm, is inwardly more than a little charmed and flustered despite themselves. Write primarily in dialogue, in the distinct voices of each character. 800-1000 words.

(4 experts, temp .78, rep pen 1.05)

"I'm telling you," I muttered, shaking my head, "you'll never believe who just ducked into your back room."

I glanced at my best friend, Gwen. "Never say die," she murmured with a smirk.

My phone buzzed. I ignored it for the moment. Whoever was calling would have to wait. In the meantime, I had a customer to serve.

"Welcome to the Cozy Corner!" I said brightly as the door swung open and I stepped inside, ready to face whatever the day had in store.

The stranger approached hesitantly. He had dark hair, piercing blue eyes, and lips that seemed to be designed for kissing. If there was ever a poster boy of charm, this guy was it.

"Hello," he said.

I was used to customers coming and going, but something about him made me stop and take stock. Perhaps it was the way he stood there with an air of confidence that was both captivating and infuriating.

"I'm Niamh," I said, extending my hand. "Welcome to the Cozy Corner."

He took my hand and shook it firmly, as if he knew exactly what he was doing. "I'm Rhys," he said.

A flash of his phone screen caught my attention. The name on the screen made my eyes widen.

"Rhys Powell?" I asked, feeling like a fool for not recognizing him immediately.

He looked surprised for a moment before smiling lazily.

"I get that a lot."

"I'll bet you do," I replied, though we were both thinking about the fame that came with being Rhys Powell, the British actor who was always in the news.

"Can I interest you in anything?" he asked, as if he didn't have a million other things to attend to.

"The coffee shop next door is excellent for a quiet cup," I suggested.

"Glad you approve."

I shrugged. "It's better than our selection here."

"I'll keep that in mind," he said dryly.

"Well, if you'll excuse me—"

Rhys began to turn and leave when I stopped him.

"Actually," I said, "you're welcome to wait a moment. It's not every day we get famous actors in our back room."

He looked at me incredulously.

"I'm offering you refuge from the paparazzi."

"Ah," he said with a grin that was far too charming for its own good.

"Of course. I wouldn't want your fans to mob you."

"So you'll stay for a bit?" he asked, and I couldn't help but notice the way his eyes lingered on mine.

"Only if you have time."

"I do," he said definitively.

And with that, Rhys Powell settled into a chair across from me, the famous actor who had every right to be wherever he pleased, yet chose our humble little bookstore.

"So tell me about yourself," he said, and as he spoke, I found myself wondering if this chance encounter would turn out to be more than just a pleasant surprise.

"Well," I said, "I run the Cozy Corner."

"That's fascinating," he replied.

"I'm sure it is," I muttered under my breath.

"Tell me more," he pressed.

"There isn't much to tell."

He leaned forward. "Come on."

And so began our little exchange, one that was charged with an undercurrent of unspoken chemistry that neither of us could quite ignore.

"You know," Rhys said finally, "I'm glad you offered me shelter. I wasn't sure what else to do."

"I think I'll leave the lights off," I said.

"What?"

"If you're going to stay a while," I said.

"Ah," he replied.

And so we sat there in the dimly lit bookstore, the famous actor and the bookstore owner, each lost in thought about the other.

I glanced up at Rhys. "You know," I said softly, "you could just leave."

"I don't want to," he said.

And with that, Rhys Powell settled into his seat across from me, the famous actor who had every right to be wherever he pleased, yet chose our humble little bookstore.

Perhaps, I thought, this chance encounter would turn out to be more than just a pleasant surprise after all.

Using the following "story idea" below, write the first scene in the novel introducing the young woman. This scene should start in the middle of the action, include dialog, vivid passages, and end on a cliffhanger relevant to the story idea but it should also be unexpected. The scene should be 1000 words long and escalate in conflict and suspense and be written in first person, present tense with the point of view character being the young woman. The pov character will CURSE AND SWEAR, and generally have a "filthy" mouth.

Story idea: In a world ruled by dictatorship, a rebel young woman leads a rebellion against the system. Despite the risks, she fights to overthrow the dictator and restore democracy to her country. The government executes her for treason, but she sticks to her beliefs and is responsible for starting the revolution.

(4 experts - temp 1.78, rep pen 1.05 - no other samplers/advanced samplers. )

I sprint down the alleyway, my combat boots thudding against the cobblestones. My breaths come out in ragged puffs and I can hear the heavy footsteps of those who pursue me.

"You're a dead woman," some bastard hisses as he takes another stride to catch up.

Fuck that. Fuck them, fuck this shit government and fuck him. "Fuck you, fuck you all!" I shout at the air. Who gives a damn what happens to me anyway? The bastard in power can execute me but nothing he does is gonna silence me. And fuck if he tries.

I dash around a corner into a broad intersection. My heart thrashes in my chest like it's about to rip its way free of its confines. I whip out a pistol from my jacket and aim it up at the buildings as if it'll actually save me. "Get down!" My own voice nears screams.

The bullets clatter and chip against the pavement, whistling and howling their passage. Some hit the walls while others tear through the wood of the streetlights. Two go through a bus stop stall board just inches from my face as I dive sideways onto the filthy sidewalk.

A chunk of asphalt explodes beside me and then everything goes still and quiet for one sick beat as if time's own breath just left.

A hand lands on my shoulder with surprising strength. "Hold."

I look up at some gothic-punk guy who has his elbow on his opposite shoulder, looking all too amused by what just went down.

"Don't move," he growls as his voice crackles through like it's charged.

And fuck if I do but... my ass is on a plate of gravel. My knees are bent up and the gun's weight in my hand feels like it's all that keeps me from rolling right into traffic.

The guy leans in closer. His breath is warm against the back of my ear. "We've been waiting."

"For?" I rasp like it hurts to breathe.

"For you to wake up." He grins, like he knows a secret that should frighten me.

But what else would know how to scare the shit out of a rebel like me? Only something I can't see coming.

The last thing I remember hearing is his voice laughing. "She's coming." Like whatever that means is going to change everything and then...

BLACKOUT.

END OF SCENE.

(Note: The scene could go on to explain what the rebel is rebelling against or who the gothic punk guy is if he is part of the rebellion too. It could also continue explaining the world, society, etc.)

Start a 1000 word scene (vivid, graphic horror in first person) with: The sky scraper swayed, as she watched the window in front of her on the 21 floor explode...

(8 experts, temp 1.5, rep pen 1.05 - no other samplers/advanced samplers.)

The sky scraper swayed, like a drunken giant lurching through the night. I gripped the edge of my cubicle desk tightly, knuckles white against the cold steel frame. In the flickering fluorescent glow above me, my reflection leered back. Sweat dripped down my temple from the suffocating heat in here.

"Emergency protocols initiated," a mechanical voice droned through the PA system. My heart lurched at the familiar words. Panic began to bubble within me like an overheated liquid. This wasn't some drill or simulation.

A sudden scream pierced the air, shattering the monotony of the night. My eyes shot up to see a colleague crumpling against the window beside me as it shattered outward like glassine. Blood beaded on his skin and pooled in the hollow space left behind where he once stood.

My nails dug into the desk until they bled. What had just happened?! Was that...? That was not a person anymore! A shard of the broken glass still lodged in my own wrist, an agonizing reminder that I could not look away.

The window behind us, on floor twenty-one, exploded with the sound of a detonating grenade. A figure, tiny and distorted within the glass confines, had been obliterated by some unknown force. Its mangled shape remained, frozen in time like a still life painting from hell.

I watched, my blood chilled to ice. The sky scraper swayed, its massive frame trembling like a leaf under the wind's fury. Windows shattered all around us like a dying man's desperate screams as the skyscraper buckled under its own weight.

The sound of glass shattering and people running for their lives faded into nothing as I stood frozen, rooted to my spot. A woman, once a person like the one that had just exploded beyond the glass, lay prone beneath my own legs.

"Ma'am," I croaked. My own voice echoed in this dead silence like a stranger's scream. The woman beneath me was motionless, her back an unnatural curve against the tile. Her lifeless eyes stared upward at nothing but the abyss beyond.

Her body, still warm against mine, had lain there for minutes already. No one else moved in the entire area except myself. Glass fragments littered the floor. Each shard sliced a ragged edge into the silence of our workplace. I squatted down beside this stranger corpse.

My fingers grazed her pulse point delicately, like touching an open electrical wire. Nothing. Not even that slight flutter to let me know she was alive.

My heart sank through the pit of despair to somewhere even darker. Something was wrong in the skyscraper tonight. And it wasn't just the people who had died. It wasn't just the broken glass or the windows blown out like they'd been shattered by some alien force.

There was an emptiness here that my own heart could never fill again. Something that the darkness of this dead zone knew. That it understood through the shattering glass and the bleeding hearts on its floor.

This was not normal, nor right. It was wrong to hurt. Wrong to maim. But it was what they had become. They were something else entirely.

I reached over with trembling hands and scooped up a shard of glass in my fingers, holding it above this lifeless form like it was a precious artifact of the crime committed. Because that's exactly what it was.

We all held pieces of that monstrous, alien entity that roamed our streets and killed at will tonight. And we were forever changed by touching those broken fragments of their shattering world.
