Model Card for gpt2-124M-edu-fineweb-10B

A 124M-parameter GPT-2 model trained on the 10B-token fineweb-edu dataset using llm.c (https://github.com/karpathy/llm.c).

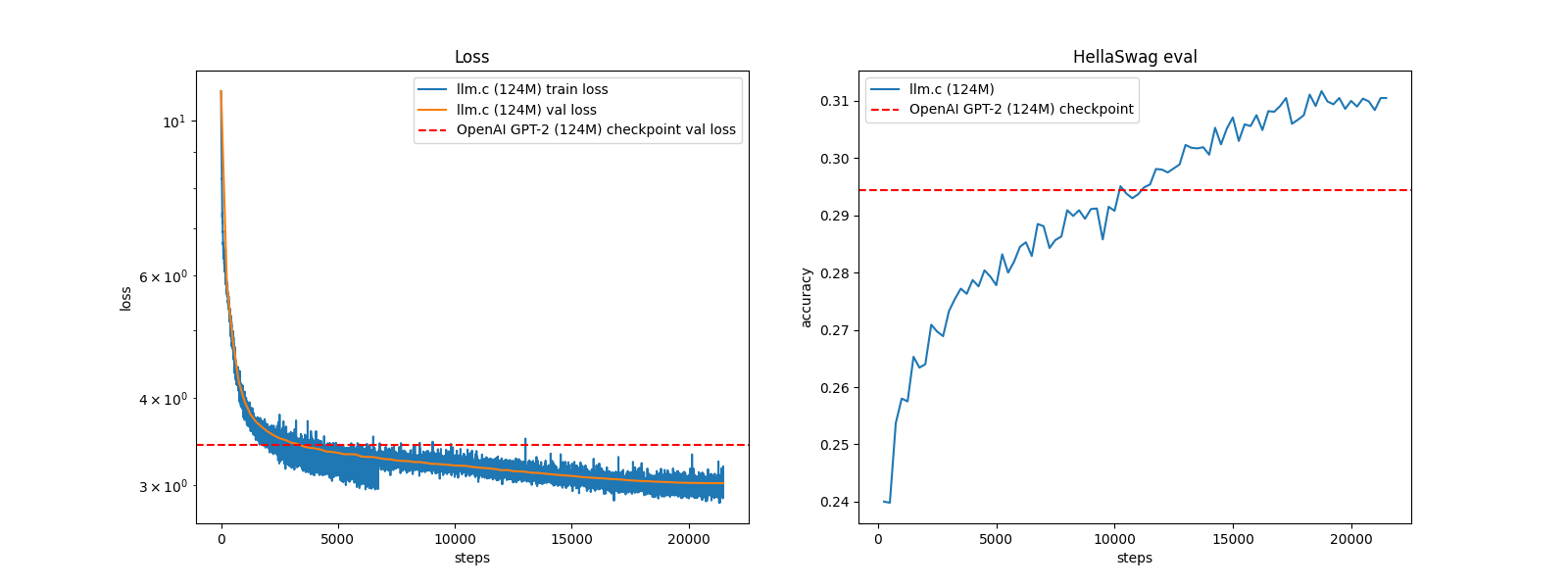

Training took 20 hours on a single 4090 GPU (limited to 350W), giving the following graphs:

Training

The training parameters were:

    ./train_gpt2cu \
        -i "dev/data/edu_fineweb10B/edu_fineweb_train_*.bin" \
        -j "dev/data/edu_fineweb10B/edu_fineweb_val_*.bin" \
        -o log124M \
        -e "d12" \
        -b 56 -t 1024 \
        -d 458752 \
        -r 1 \
        -z 1 \
        -c 0.1 \
        -l 0.002 \
        -q 0.0 \
        -u 700 \
        -n 5000 \
        -v 250 -s 20000 \
        -h 1
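The batch-size flags in the command are related by simple arithmetic. The sketch below works it out, assuming the flag meanings given in llm.c's usage text: `-b` is the micro-batch size in sequences, `-t` is the sequence length, and `-d` is the total batch size in tokens per optimizer step. The step-count estimate rests on two further assumptions that the card does not state: exactly 10e9 training tokens and a single pass over the data. Treat that figure as approximate.

```python
# Batch-size arithmetic for the llm.c flags used in the training command.
micro_batch_size = 56        # -b: sequences per micro-batch
sequence_length = 1024       # -t: tokens per sequence
total_batch_tokens = 458752  # -d: tokens per optimizer step
num_gpus = 1                 # a single 4090, as stated in the card

# Tokens processed in one forward/backward pass.
tokens_per_micro_batch = micro_batch_size * sequence_length * num_gpus

# The total batch must be a whole number of micro-batches.
assert total_batch_tokens % tokens_per_micro_batch == 0
grad_accum_steps = total_batch_tokens // tokens_per_micro_batch

# Assumption (not stated in the card): exactly 10e9 training tokens, one epoch.
assumed_train_tokens = 10_000_000_000
approx_optimizer_steps = assumed_train_tokens // total_batch_tokens

print("tokens per micro-batch:", tokens_per_micro_batch)
print("gradient accumulation steps:", grad_accum_steps)
print("approximate optimizer steps:", approx_optimizer_steps)
```

Under these assumptions each optimizer step accumulates gradients over 8 micro-batches of 57,344 tokens. This is a common way to reach a large effective batch on a single consumer GPU.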

The model has had no further finetuning.

Evaluation

Evaluations were run with the EleutherAI LM Evaluation Harness, using the settings described for the open_llm_leaderboard, so that the scores can be compared with those published for openai-community/gpt2.

| Eval Test | Score |
|---|---|
| arc_challenge (25 shot) | 24.49 |
| gsm8k (5 shot) | 0.08 |
| hellaswag (10 shot) | 32.64 |
| mmlu (5 shot) | 26.06 |
| truthfulqa (0 shot) | 42.45 |
| winogrande (5 shot) | 52.17 |
| Overall Score | 29.65 |
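The overall score in the table matches the unweighted mean of the six benchmark scores. A minimal check, assuming that is how it was computed (the card does not say so explicitly):

```python
from statistics import mean

# Per-benchmark scores copied from the table above.
scores = {
    "arc_challenge": 24.49,
    "gsm8k": 0.08,
    "hellaswag": 32.64,
    "mmlu": 26.06,
    "truthfulqa": 42.45,
    "winogrande": 52.17,
}

# Unweighted mean, rounded to two decimals like the table.
overall_score = round(mean(scores.values()), 2)
print(overall_score)
```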
